Learning:

Sane Data Updates Are Harder than You Think: Part 2

Second in a three-part series by Adrian Holovaty about hairy data-parsing problems from a journalist’s perspecti

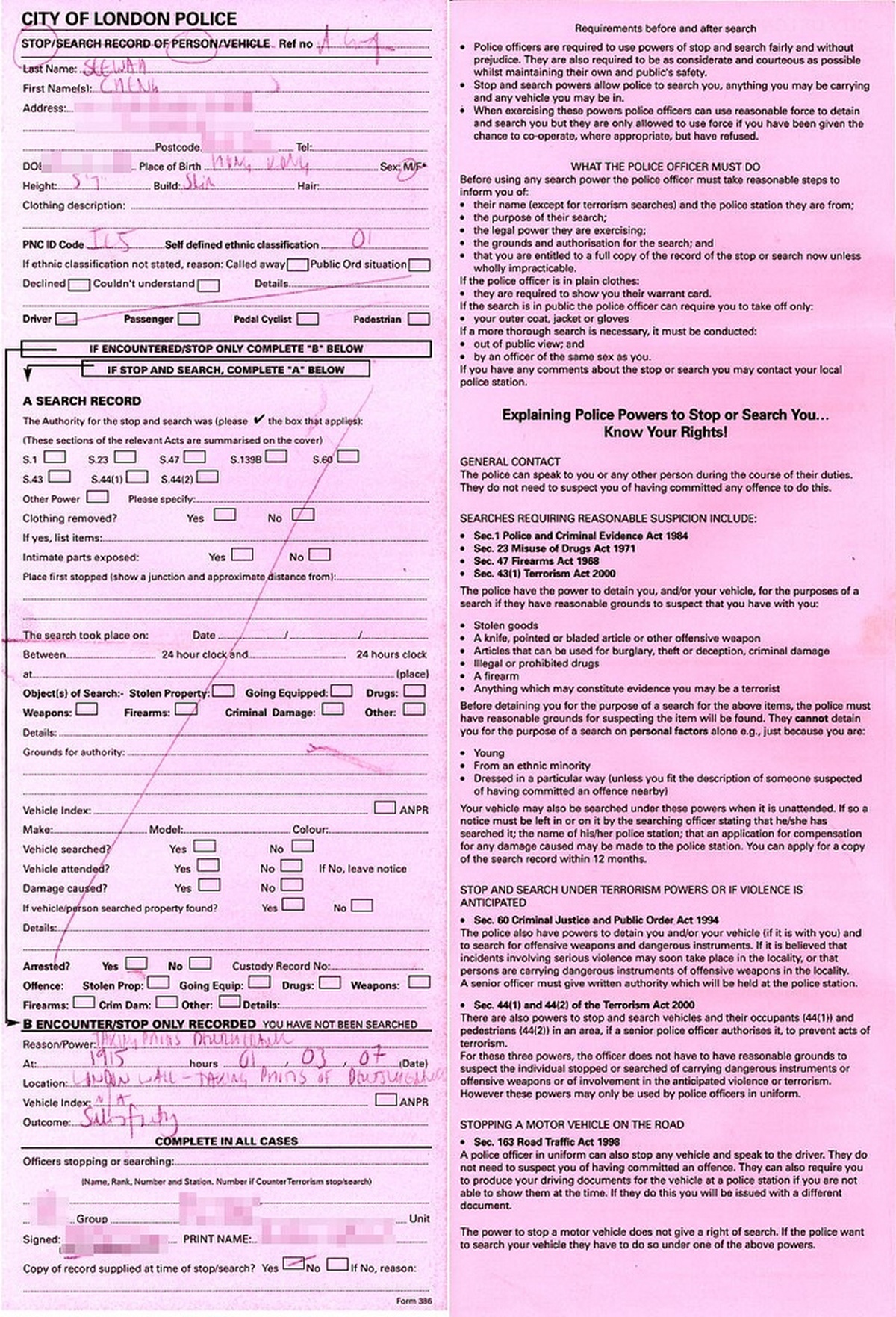

Government data the hard way (See Wah via Flickr)

Welcome to part two of the “hairy data-parsing problems” series! This is a three-part series with battle-scarred advice on how to manage data you get from public records and other databases journalists use. Whether you’re using that data to make a publicly visible mashup, or gather data to write a story on it, I hope you’ll find these tips useful.

In part one, I introduced the series and focused on how to crawl a database to detect records that are new or changed (i.e., find records that differ from records you’ve crawled in the past).

In this, part two, I’ll assume you’ve identified some new/changed records. The next step is to reconcile these records with your existing data. The key question I’ll answer is: given a record from your data source, how can you either find that record in your old data (so you can update it) or be confident that it doesn’t exist in your old data (so you can add it)?

Here’s the pseudocode for the whole data-update process that I provided in part one:

Get all records that are new or changed since your last import

For each record:

Find the record in your old data

If you didn't find it:

Add it

If you found it:

Change whatever bits of it are different

For all your existing data:

If it wasn't in the source data any longer:

Delete it

In part one, I covered the first bold line. In this article, I’ll cover the second bold line.

Primary Keys

If your data source is good, it’ll include a primary key for each record. This is a unique number, or set of characters, that identifies a record and doesn’t change if the underlying record’s fields change.

For example, say you’re regularly scraping some crime data, and you get a record with these attributes:

- ID: 633792

- Location: 800 S. Halsted

- Date: January 13

- Crime: Ruby programming while intoxicated

Then, say you scrape the data again a day later, and this time the data source includes this record:

- ID: 633792

- Location: 800 N. Halsted

- Date: January 13

- Crime: Ruby programming

Looks like the cops made a few errors in their initial report. The crime happened at 800 North Halsted, not South Halsted—and the crime wasn’t to program Ruby while intoxicated, it was to program Ruby in general.

Fortunately, even though a few data attributes changed, the ID (the primary key) remained the same. And that illustrates the purpose of primary keys: whatever data attributes change, however often they change, the primary key remains constant. Throughout the thick-and-thin of data changes, the primary key is a consistent beacon, something you can rely on.

So if your data source has primary keys, it’s very easy to find a record in your old data. Just search your old data by primary key.

Or, Not

But this is not Database Theory 101 class, and you probably already knew what a primary key is, and this series is not titled “beautiful, happy data sets.”

In the real world, there is no guarantee that a primary key in your data source is actually a primary key. It may look like one, the documentation may say it is one, but that can’t be trusted.

Specifically, in my work, I’ve seen three types of primary-key errors:

- Multiple (distinct) records with the same “primary key.” This means it’s not actually a primary key. Ahem.

- A primary key genuinely being unique but getting recycled when records are deleted. (Laughing? No less than SQLite does this. Try inserting a row in a SQLite table with an auto-incrementing ID. Check the ID, then delete the row, then insert another row. The ID will be the same both times.)

- A record changing its primary key because it had originally been typed in by hand and was later corrected. Happens more often than you might think, in cases where paper records are converted to digital data through imperfect processes.

How to deal with this insanity? Let me give you some tips. First, start with a gut check.

The Initial Gut-Check

You can save yourself future headaches by doing a quick analysis on the “primary key” field the first time you import a data set (i.e., before you have to worry about updating it). Just import all of the data, then run a query to calculate how many unique values there are in the “primary key” field. That would be something like “SELECT COUNT(DISTINCT(id)) FROM mytable” if you’re using SQL.

If that number is less than the number of rows in the data, you know right away that you’re dealing with funny business. Don’t trust that field, and begin using air quotes around the phrase “primary key” each time you describe the data to colleagues.

If that number is equal to the number of rows in the data, congratulations—now you should be exactly one percent more confident that the primary keys are actually unique. You still might get burned.

So how do you build a system that catches buggy primary keys? The key principle is: trust but verify. Specifically, you can add a fail-safe to your data-update process—a bit of code that identifies suspicious data changes and notifies you if something looks fishy.

Fail-Safe 1: Check Number of Changed Fields

Here’s one way to do that. When you run your data-update script, use the primary key to look up the record in your existing data. If it’s there, do a field-by-field comparison to see whether any of the fields have changed. If too many fields have changed, that’s a signal that the primary key might not be trustworthy—and a human should be notified to look into it. (Which human? That’s an organizational question. Maybe you, maybe somebody else on your staff.)

In pseudocode:

For each record:

Look up the record in your old data, using the primary key

If an old record exists:

number_of_changed_fields = 0

For each field in the old record:

If the field's value is different:

Increment number_of_changed_fields

If number_of_changed_fields >= CHANGE_TOLERANCE:

Alert a human

Don't update the record

Else:

Update the record

Else:

Add the record

The CHANGE_TOLERANCE depends on your data, your application and your judgment. If your data set has 15 fields, and all 15 of them change while the primary key stays the same for a given record, that’s a strong indicator that the primary key for that given record might be buggy. If your data has 15 fields and only one of them changes, that’s a strong indicator that the primary key is sound.

Sometimes ambiguous situations happen. Say your data has two fields and both of them change, while the primary key stays the same. In this case, 100% of the data has changed (other than the primary key), but there are only two fields, so that’s not necessarily unreasonable. What you do there depends on the application. For a simple “alert people when there’s something new” service, that might be OK; for a hard-hitting investigative piece that demands accurate data, it should be checked out.

Fail-Safe 2: Check Weighted Fields

In most data sets, certain fields are more important than others. For example, in a crime data set, the crime type and location are more important than the name of the responding police officer. (No offense to my brother-in-law, who is a police officer. Dan, you are awesome.)

You can use this relative importance to improve your fail-safe checking. Say your data set has 20 fields: five important ones and 15 unimportant ones. Say you run your updater and find a row in which all 15 of the unimportant fields have changed values, but the five important ones have stayed the same. If you were using a strict “percentage of fields changed” strategy to notify a human about odd updates, that would have triggered it. But in this case, if the five important fields didn’t change, and the primary key was the same, it’s probably OK. On the other hand, if a record came in with all five important fields changed and none of the 15 unimportant ones changed, that’s a clue that the record’s primary key might be bogus.

Fail-Safe 3: Catch Duplicate Primary Keys

Finally, a third fail-safe, and the simplest of the three, is to catch duplicate primary keys. It’s easy to do: just keep track of every primary key you’ve seen in this particular data import process, and if you see a duplicate, notify a human. There’s no excuse not to do this every time you import data — it’s trivial code to write. Pseudocode:

keys_seen = []

For each record:

If record's key is in keys_seen:

Notify human!

Else:

Add record's key to keys_seen

Process record

Human Intervention

What should a human do when he or she is alerted to some funny-looking data? The answer, I’m afraid, is “it depends.”

Maybe your data source offers a web version of the data that would shed some light on the issue—check that for clues. Maybe you should mark that particular record with an asterisk, in cases where you’re republishing the data. Maybe (in case of critical data) you should call the data provider and just talk to a human.

The most important thing is: do something.

What to Do When there Is No Primary Key

The techniques above all assumed that your data set has a primary key field, trustworthy or not. But some data sets don’t have primary keys. How do you reconcile these records with your existing data?

The only solution, apart from lobbying your data provider to add a primary key field to their published data (which sometimes works!), is to use natural key. This is when you use some other field, or combination of fields, as a “proxy” to primary key.

Let’s consider an example, again using crime data. Say your crime data set has no primary keys, but you do have each crime’s date, time and police officer badge number. It’s likely impossible for a police officer to be at multiple places at the same date/time, so there’s a good chance that the combination of badge number and date/time will uniquely identify a crime.

Of course, this thinking breaks down if you consider a police officer might have caught *two* criminals at the same time, in which case two records would have been created—both with the same date/time and badge number. So that combination of fields is not a perfect proxy to primary key, and furthermore, this illustrates the difficulty (some might say impossibility) of this “natural key” technique.

Another problem with this technique is data updates. Say you’re relying on date/time and badge number as your natural key. What happens when one of those two fields changes—say, your data source fixes a typo in a badge number, or in the date/time? Your system will interpret it as a brand new record, rather than an update to an existing record, so you’ll have two records in your data: a correct one and an outdated, incorrect one.

Those are two big problems with natural keys: the fields that constitute the natural key might change, and even an unchanging natural key might not uniquely identify a record.

So, it follows that, if you’re in a situation where you’re forced to use natural keys, you should take care to reduce the risk of both of those problems. (And you’ll need to be OK with the fact that you will eventually have inaccurate data, whatever you do.)

How to reduce the risk? In forming your natural key, choose fields that have the least likeliness of changing. This can require deep domain knowledge—not only of the nature of the data (can a police officer be in more than one place at the same time?) but also of the nature of the *collection* of the data (is it initially recorded on paper, then digitized?). The more you know about your source’s data-collection process, and the more cynical a thinker you are, the better. To learn how your source collects data, call them on the phone and ask.

Also, use the “catch duplicate primary keys” fail-safe I described above to catch duplicate natural keys. If you import a few thousand records with natural keys, without finding duplicates, that’s a good sign your natural keys are reasonable.

Logistics of Creating a Natural Key

If you use a natural key, you’ll have to answer the tactical question of, “Do I create a separate field in my database that stores the natural key, or do I just do a multi-field search whenever I check for existing records?” Let me explain.

In the first case, you would create a field in your database to hold the natural key, and the values would be a string concatenation of the individual field values. So, if your natural key consists of two fields—a building ID 49304 and a permit ID 8810—then the natural key might be “49304-8810”.

If you use this technique, insert a character between the field values to prevent ambiguity. The character should be one that can *not* appear as a field value. In the example above, I added a hyphen between the building ID and permit ID. Without this, the natural key for “building ID 49304, permit ID 8810” would be identical to the natural key for “building ID 4930, permit ID 48810.”

Another option is to avoid creating a separate natural key field and simply do a multiple-field lookup each time you want to search by natural key. So, in the building/permit ID example, you’d do something like “SELECT * FROM mytable WHERE building_id=49304 AND permit_id=8810;”. An important thing to note here is that your data must remain exactly the same as in your source — that is, don’t massage it in any way (e.g., by converting strings to title case). Otherwise you’re changing your natural key!

Finally, note that dates and times can cause problems as natural keys, due to time zone and daylight savings time issues. Depending on the database you’re using, your data might be converted into your local time zone upon insert (or upon selection), in which case you should take care that the values in your database are consistent with what’s in your source.

Until Next Time!

That’s it for part two. In the next part, I’ll cover the next step of the process—techniques for changing data attributes that are different across data updates.

People

Credits

-

Adrian Holovaty

Adrian Holovaty has been doing data journalism on the web since 2002. He cocreated Django and founded EveryBlock. Now he’s building Soundslice.