
Do News Bots Dream of Electric Sheep?

What are we even talking about when we talk about bots?

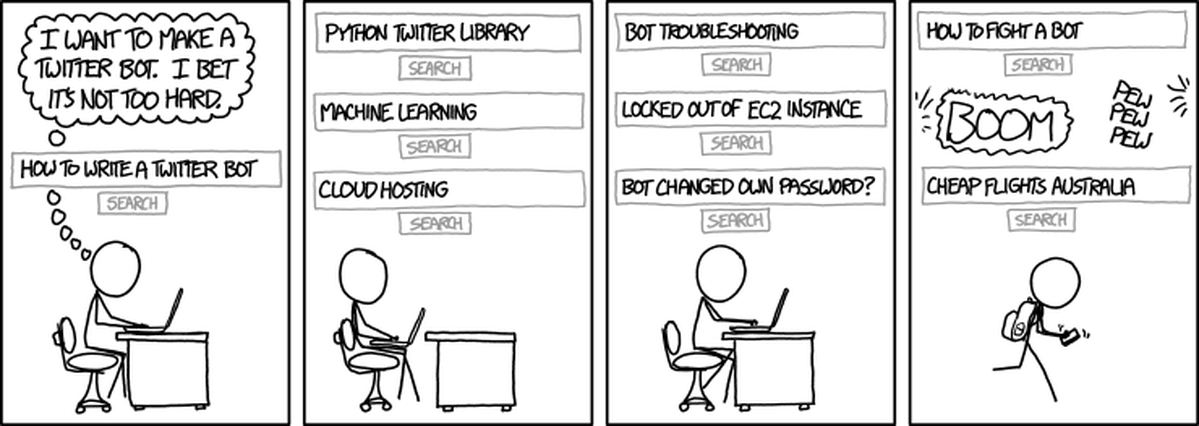

(xkcd)

Bots have been making the news more and more lately, partly because the underlying technology has become more common, and partly because some bots have turned into rampaging racists. PCWorld recently suggested that 2016 may be “the year of the bots.” But if you read the article, all of its examples are chatbots: bots, to be sure, but only a subset.

What Is This Thing Called Bot?

A bot, in the broadest sense, is merely “an agent that does an automated process,” said Alexis Lloyd, who creates bots and other cutting-edge projects at the New York Times Research & Development Lab.

But if a “bot” is simply a computer program with an automated function, doesn’t that make everything from TweetDeck to your spam filter a bot? “That’s where it gets fuzzy,” Lloyd said.

She and a dozen other bot experts wrote a “botifesto” for Vice earlier this year, challenging readers to think critically about the programs they write and the unintended consequences those programs could have.

“One distinguishing feature of bots is that they are semi-autonomous: they exhibit behavior that is partially a function of the intentions that a programmer builds into them, and partially a function of algorithms and machine learning abilities that respond to a plenitude of inputs,” the botifesto read.

For Lloyd and other journalist botmakers, there’s a spectrum of “bots,” ranging from the simplest automation to the most complex artificial intelligence. The more a bot is able to make its own inferences and decisions, the farther it moves up the spectrum toward true AI.

Automation vs. Intelligence

Justin Myers works on an Associated Press team whose express goal is automating routine, formulaic reports, like quarterly earnings. “I would not go so far as to say ‘AI’ for anything that I’m doing and don’t expect to for a very long time,” he said. “I tend to just use ‘automation.’”

In his mind, AI is a computer program that runs with less human supervision: you could run it, and what it does could surprise you.

Take Tay. Microsoft’s disastrous chatbot went from imitating a teenage girl to imitating a Nazi extremist in less than a day. Tay was a Twitter bot, a piece of code that takes in tweets and sends out tweets. And yet everyone from The Guardian to TechCrunch referred to Tay as an “AI bot.” At what point does a Twitter bot, a technology so simple it’s used to teach kids to code, qualify as “artificial intelligence”?

Microsoft hasn’t shared details on how its team made Tay, but it may have involved tools like machine learning and natural language processing. Myers noted that even though the bot interacted with users on Twitter, that doesn’t necessarily mean it’s a simple Twitter bot.

“I think I would make the distinction between the content and how it’s created versus the interface,” he said.

Meredith Broussard, one of the authors of the Vice botifesto, published a study last year called “AI in Investigative Reporting.” She agreed that there’s both overlap and confusion between terms like “automation” and “AI.”

For her own purposes, she said, she thinks of a bot as akin to an IF/THEN statement, as opposed to more complex AI programming. “Behind the scenes, everything that a bot does can be converted into math,” she said.
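To make Broussard’s comparison concrete, here is a minimal Python sketch of a bot built from nothing but IF/THEN rules. The keywords, replies and the `reply` function are hypothetical, invented for illustration. The point is that every response traces back to a rule a person wrote, so, in Myers’s terms, nothing it does should surprise its author.

```python
# A hypothetical rule-based reply bot: every response comes from an
# explicit IF/THEN rule written by a programmer; nothing is learned.

RULES = [
    # (keyword to look for, canned reply) -- illustrative values only
    ("hello", "Hi! I'm a news bot."),
    ("headline", "Here is today's top headline."),
]

DEFAULT_REPLY = "Sorry, I don't have a rule for that."


def reply(message: str) -> str:
    """Return the reply for the first rule whose keyword appears in the message."""
    text = message.lower()
    for keyword, response in RULES:
        if keyword in text:   # IF the keyword appears...
            return response   # ...THEN send the canned reply
    return DEFAULT_REPLY      # no rule matched


if __name__ == "__main__":
    print(reply("Hello there"))
    print(reply("Tell me a joke"))
```

However many rules you add, the bot only ever checks conditions and returns prewritten text, which is why it sits at the automation end of the spectrum.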

Tay may have used natural language processing and machine learning; we don’t know for sure, since Microsoft hasn’t released its code. Those tools are part of a class of computer programs that many people refer to as “AI,” Broussard said. It follows, then, that a program using them would be an AI program.

But if there’s one thing the experts can agree on, it’s that no one can agree on how to define these things. Lloyd, at NYTLabs, said some data scientists don’t want to refer to machine learning and natural language processing as AI.

Lloyd said her own definition of AI is a computer that has a set of processes or rubrics by which it can make its own decisions. It has a framework, essentially, for making decisions it wasn’t explicitly programmed to make.

Robot Terrors

When I was working as a reporter on Reuters’ data team, I automated some of the articles I was writing. The reports were routine, repetitious and data-heavy: the perfect kind of task to pawn off on a computer.

I was chatting with a colleague who had been covering this issue for years, and made an offhand joke about replacing our team with robots. But it wasn’t really a bot; I was just making conversation. All I’d done was write a computer program, inside our CMS, that autocompleted sentences based on data from Reuters’ Eikon program, which updated automatically. If I hadn’t been joking with my colleague, I wouldn’t even have called it a robot.
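A sentence-autocompleting program like this can be sketched in a few lines of Python. This is not the Reuters code: the function names, the company and the figures are hypothetical, and in practice the numbers would arrive from a data feed such as Eikon rather than being typed in. It shows why this is automation rather than AI: a human fixes the sentence structure in advance, and the program only picks which prewritten words fit the numbers.

```python
# A hypothetical template-filling sketch: a human writes the sentence
# structure; the program only slots in numbers and picks "up"/"down".


def percent_change(old: float, new: float) -> float:
    """Percent change from old to new; old must be nonzero."""
    if old == 0:
        raise ValueError("year-ago value must be nonzero")
    return (new - old) / old * 100


def earnings_sentence(company: str, quarter: str,
                      revenue_m: float, year_ago_revenue_m: float) -> str:
    """Fill a fixed earnings template (revenue in millions of dollars)."""
    change = percent_change(year_ago_revenue_m, revenue_m)
    if change > 0:
        trend = f"up {change:.1f}%"
    elif change < 0:
        trend = f"down {abs(change):.1f}%"
    else:
        trend = "unchanged"
    return (f"{company} reported {quarter} revenue of ${revenue_m:,.1f} million, "
            f"{trend} from the same quarter a year earlier.")


if __name__ == "__main__":
    print(earnings_sentence("Example Corp", "third-quarter", 120.0, 100.0))
```

Run on the sample figures, it prints “Example Corp reported third-quarter revenue of $120.0 million, up 20.0% from the same quarter a year earlier.”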

Myers shared a funny story he’d heard from colleagues at the AP: more than once, a visitor had come to their headquarters in New York, curious about the automation team. “People have asked to see the robot,” he said.

It’s funny to picture a humanoid robot sitting patiently at a desk at the AP, typing away at quarterly earnings reports. And yet that’s the kind of real confusion these terms create when they’re thrown around loosely.

Myers said he’d like to see more transparency and precision in how journalists refer to their bots (or automation, or artificial intelligence programs). While there appears to be a spectrum of complexity, ranging from simple automation to sophisticated neural network programs, the points along that spectrum have yet to be nailed down.

“There still needs to be a lot more transparency,” Myers said. “[We’re] in a business where precision of language is really important.”

Credits


Samantha Sunne

Samantha Sunne is a freelancer working on data and investigative stories. She also works with Hacks/Hackers, runs a newsletter called Tools for Reporters and is a perpetual fixture in New Orleans bars and coffee shops.