Features:

How We Tracked Cable News Chyrons

Our quick-and-dirty app for scraping and juxtaposing TV commentary in real time

The original Chiron was Achilles’ tutor. His lower third is mostly horse.

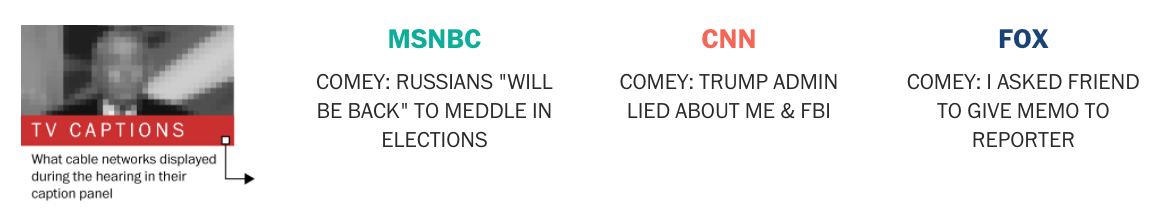

Reporting on media bias and the bubbles it creates is nothing new. But last week’s Senate Intelligence Committee hearing provided a rare opportunity to explore a new angle. CNN, MSNBC, and Fox News all aired former FBI director James Comey’s testimony live and uninterrupted. The graphics team at The Washington Post tracked what each network displayed in its lower third caption panel—also called a chyron—and showed it to readers as the hearing unfolded. (You can see the finished piece here.)

I dropped the idea of tracking cable news lower thirds into our #graphics-pitches Slack room, and we all agreed the concept was interesting but it might be difficult to tell a story. But the more my next-desk-neighbor Samuel Granados and I thought about it, the stronger we felt the idea was. If we could juxtapose what different networks were saying about the same event in real time, it would expose—in an immediate and concrete way—what networks felt was the most important news out of the hearing. Even if it just confirmed what we’ve experienced anecdotally when flipping between channels, we would have hard data to back up or challenge our impressions. The concept of comparing three channels at once felt compelling. All we had to do now was make it.

Screenshot of the final piece

Breaking Down the Technical Problems

Finding a way to “scrape” the text off of cable news was an intimidating task. But by splitting up the problem into smaller, easier steps, I was able to get a basic scraper working. Those steps were:

- Take a screenshot of the news coverage.

- Crop that image so that just the lower third of on-screen text remains

- Run an optical character recognition (OCR) program on the image, and save the resulting text somewhere. I used tesseract for this.

- Repeat.

None of these were too difficult separately, so combining them into a single script was totally doable. (It seems half of my job is finding interesting reasons to combine code others have written—a testament to the strong state of open source software.)

Did you know OS X has a built-in command for capturing screenshots? I didn’t.

def take_screenshot(window_id):

f = tempfile.NamedTemporaryFile(suffix=‘.png’, prefix=’imagemagick_’)

filename = f.name

# The -l flag is undocumented. Specify a window id to capture just that

# window.

# https://apple.stackexchange.com/questions/56561/how-do-i-find-the-windowid-to-pass-to-screencapture-l

command = 'screencapture -o -x -l %s %s' % (window_id, filename)

run_shell_command(command)

image = Image.open(filename)

return image

def get_current_chyron_text(window_id, network):

screenshot = take_screenshot(window_id)

tv = screenshot.crop(box=SCREEN_TO_TV_BOX)

chyron = tv.crop(box=CHYRON_BOXES.get(network))

text = pytesseract.image_to_string(chyron)

return text

The raw results were good but not without error. The OCR often mistook capital Os for zeros, and had trouble with MSNBC’s font. And sometimes the lower-third of the screen contained the name and title of the person on screen, details we felt were irrelevant for our goal. But luckily for us, the goal wasn’t to build a self-sustaining tool to set loose and admire—it just had to work for a few hours.

# Run forever

while True:

for i in range(len(window_ids)):

window_id = window_ids[i]

network = NETWORK_OPEN_ORDER[i]

text = get_current_chyron_text(window_id, network)

if text and text != lastText[network]:

timestamp = get_timestamp()

writerow_google_sheet([timestamp, network, text, 'FALSE'])

lastText[network] = text

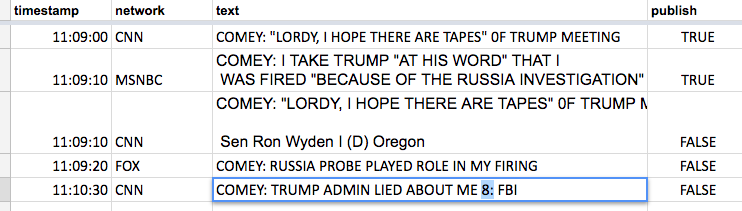

The final version of the “scraping” script added the raw OCRed text to a new row in a Google Sheet, where colleagues Kim Soffen and Kevin Uhrmacher were waiting to fix any errors. (In addition to being phenomenal graphics reporters, they’re really good at English—far better than my computer is. Let the robots do what they’re good at, and the humans likewise.)

Screenshot of the Google Sheet

A separate script downloaded this Google Sheet, looped through the results and sent the ones marked “Publish” to our Amazon S3 server.

On the front-end side, Samuel Granados created a design that focused on the live experience but also offered the complete history of lower third text for those interested. We implemented it as a React app that requested the data and updated itself every 30 seconds.

What Worked Well, and What Didn’t

The Google Sheets component was a last-minute addition. Originally the script just wrote to a local .csv file. I figured I’d be able to handle the edits myself. I was wrong. Put simply, the piece wouldn’t have worked without Google’s collaborative editing feature. Kim and Kevin were able to both be fixing the OCR errors as they came in, and I was able to focus on making sure nothing melted down.

Our graphics team has been using collaborative editing much more often lately for text-heavy graphics (thank you, NYTimes friends for ArchieML). Collaborative editing was essential for our odd use-case of needing to clean data real-time. Next time, I’d plan to use Google Sheets for editing from the beginning.

We saved a copy of the raw data. You might think it’s useless given the edited version in Google Sheets was corrected, but eventually something will go wrong, and you’ll need to refer back to the original. I did this by having the script write .csv data to stdout, and redirecting that output to append a .csv file:

python bin/scrape.py >> raw-comey-hearing.csvBy redirecting the output with

>>, I could safely stop and restart the script without overwriting the resulting file. (Redirecting a process’s output with two>>characters adds the output to the end of an existing file, while a single>character would have overritten the existing file instead.)Keep separate tasks separate. When developing, assume each other piece in the system works. When building the script to publish new data, just assume the Google Sheet will be filled out somehow. When building the React app, assume the latest data will always live at the specified URL. By modularizing these tasks, you free your brain to concentrate on just one step at a time.

I wish I had done more automatic editing to the raw OCR results. We ended up changing many C0MEY’s to COMEY, for example. That could have been pretty easily automated to save time.

Chyrons sometimes contain multiple levels of text. CNN used an uppercase, traditional lower-third above a lowercase sentence further explaining a situation. They often used the same area to identify people on screen.

I would have liked to account for these different kinds of text.

Where From Here

Our project was an experiment, but the positive reaction shows that people are interested in better understanding and comparing the coverage choices news organizations make. Lower-third text data is a small subset of this, but it’s particularly interesting: The text is updated far more often than headlines are online, and so much of the American public gets their news from cable television. I think the open source news community would do well to find ways to make this information more readily available.

Pratheek Rebala’s @CNNChyronBot is a fantastic start. (I really can’t believe I didn’t find out about it until halfway through my piece.) His project tweets each time CNN changes its lower third text. It’s quite accurate and handles cases I didn’t have to (commercial breaks, for example). It’s really impressive work. If you’re interested in this sort of data, I’d follow his example.

Credits

-

Kevin Schaul

Kevin Schaul

Kevin Schaul is a graphics editor at the Washington Post. He graduated from the University of Minnesota with a degree in computer science, though all his professional work has been in newsrooms. He grew up in the Windy City suburbs of Gurnee and Lake Forest, where he developed a deep love for Chicago sports and deep dish pizza. In his free time, Kevin dabbles in photography and distance running.