Introducing Clarify

An Open Source Elections-Data URL Locator and Parser from OpenElections

State election results are like snowflakes: each state, and often each county, produces its own special website to share the vote totals. For a project like OpenElections, that means finding the results data and figuring out how to extract it. In many cases, extraction requires scraping.

But in our research into how election results are stored, we found that a handful of sites used a common vendor: Clarity Elections, which is owned by SOE Software. States that use Clarity generally share a common look and features, including statewide summary results, voter turnout statistics, and a page linking to county-specific results.

The good news is that Clarity sites also include a “Reports” tab that has structured data downloads in several formats, including XML, XLS, and CSV. The results data are contained in .ZIP files, so they aren’t particularly large or unwieldy. But there’s a catch: the URLs aren’t easily predictable. Here’s a URL for a statewide page:

http://results.enr.clarityelections.com/KY/15261/30235/en/summary.html

The first numeric segment—15261 in this case—uniquely identifies this election, the 2010 primary in Kentucky. But the second numeric segment—30235—represents a subpage, and each county in Kentucky has a different one. Switch over to the page listing the county pages, and you get all the links. Sort of.
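To make the URL anatomy concrete, here is a minimal sketch that splits a statewide Clarity URL into its parts. The function name `split_clarity_url` and the dictionary keys are hypothetical and are not part of Clarify; the five-segment layout is an assumption based only on the example URL above, and other Clarity pages may be laid out differently.

```python
from urllib.parse import urlparse


def split_clarity_url(url):
    """Split a statewide Clarity URL into named path segments.

    Assumes the layout /<jurisdiction>/<election id>/<subpage id>/<language>/<page>,
    which is inferred from a single example URL.
    """
    parts = urlparse(url).path.strip("/").split("/")
    if len(parts) != 5:
        raise ValueError("unexpected Clarity URL layout: %s" % url)
    jurisdiction, election_id, subpage_id, language, page = parts
    return {
        "jurisdiction": jurisdiction,
        "election_id": election_id,
        "subpage_id": subpage_id,
        "language": language,
        "page": page,
    }


info = split_clarity_url(
    "http://results.enr.clarityelections.com/KY/15261/30235/en/summary.html"
)
print(info["election_id"], info["subpage_id"])
```

The election ID is stable for a given election, while the subpage ID is the piece that has to be discovered for each county.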

The county-specific links, which lead to pages that have structured results files at the precinct level, actually involve redirects, and the secondary numeric segments in their URLs aren’t resolved until we visit them. That means doing a lot of clicking and copying, or scraping. We chose the latter path, although that presents some difficulties as well. Using our time at OpenNews’ New York Code Convening in mid-November, we created a Python library called Clarify that provides access to those URLs containing structured election results data and parses the XML version of it. We’re already using it in OpenElections, and now we’re releasing it for others who work in states that use Clarity software.

Finding the Links

We began with the idea that people interested in pulling election results from Clarity-powered sites would already know the URL of the main page for a given election, and that this page would represent one election in one jurisdiction. A jurisdiction could be a state, a county, or a city. Clarify assumes that users are starting with the highest-level jurisdiction available and then drilling down.

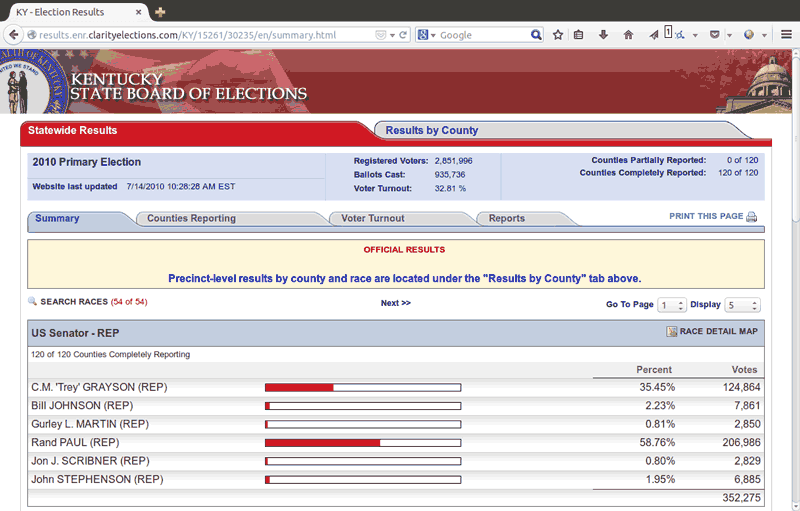

A state’s election summary page

A jurisdiction like the Commonwealth of Kentucky has sub-jurisdictions (counties). Each jurisdiction has structured data that aggregates results for the next level of sub-jurisdiction. For example, for each election, a state has a single file that compiles all of the county-level results. Each county has a file that contains precinct-level results for all precincts in that county. Clarify’s Jurisdiction class applies to any of these jurisdictions, but has a level attribute that specifies whether it is a state, county, or city. By supplying a URL and level, users can create an instance:

from clarify.jurisdiction import Jurisdiction

url = 'http://results.enr.clarityelections.com/KY/15261/30235/en/summary.html'

jurisdiction = Jurisdiction(url=url, level='state')

In addition to providing some details about the results available, including URLs to summary-level structured data in .ZIP files, Jurisdiction objects also provide a way to find the results data for any sub-jurisdictions. This is where we ran into several issues.

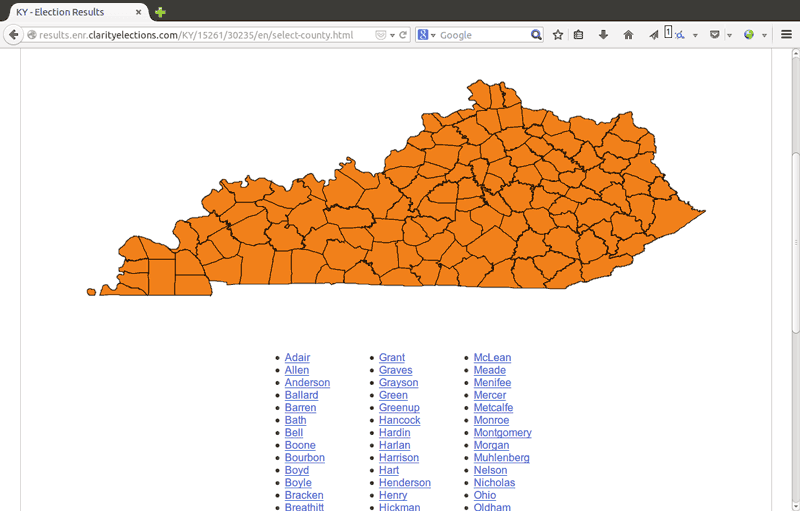

A page that lists counties with available results

Because the county-specific page URLs needed to be scraped, we had to load and parse the page that lists the state’s counties and then follow the redirects for each of the county pages. Following redirects is simple when they are implemented using HTTP headers, but that’s not the case with the Clarity sites. In fact, we found two different ways Clarity sites handled redirects: one that put the redirect URL in a meta tag and another that used JavaScript.

This is an example of a redirect page that uses a meta tag:

<html><head><META HTTP-EQUIV="Refresh" CONTENT="0; URL=./27401/en/summary.html"></head></html>

And here’s an example of a redirect page that uses JavaScript (HTML indented for legibility):

<html>
  <head>
    <script src="./129035/js/version.js" type="text/javascript"></script>
    <script type="text/javascript">TemplateRedirect("summary.html","./129035", "", "Mobile01");</script>
  </head>
</html>
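As an illustration of handling both redirect styles, the sketch below pulls the numeric segment out of each kind of page with regular expressions. This is not how Clarify does it (the library uses lxml); the patterns and the function name `redirect_segment` are assumptions built only from the two example pages above, so real pages may need looser matching.

```python
import re

# Patterns inferred from the two example redirect pages; both assume the
# target is a relative path that starts with "./" followed by digits.
META_REFRESH = re.compile(
    r'<meta[^>]*http-equiv=["\']refresh["\'][^>]*url=\./(\d+)/',
    re.IGNORECASE,
)
JS_REDIRECT = re.compile(r'TemplateRedirect\(\s*"[^"]*"\s*,\s*"\./(\d+)"')


def redirect_segment(html):
    """Return the numeric URL segment from a redirect page, or None."""
    for pattern in (META_REFRESH, JS_REDIRECT):
        match = pattern.search(html)
        if match:
            return match.group(1)
    return None


meta_page = (
    '<html><head><META HTTP-EQUIV="Refresh" '
    'CONTENT="0; URL=./27401/en/summary.html"></head></html>'
)
js_page = (
    '<html><head>'
    '<script src="./129035/js/version.js" type="text/javascript"></script>'
    '<script type="text/javascript">'
    'TemplateRedirect("summary.html","./129035", "", "Mobile01");'
    '</script></head></html>'
)

meta_segment = redirect_segment(meta_page)
js_segment = redirect_segment(js_page)
print(meta_segment, js_segment)
```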

What this means is that we have to request each of the pages that performs the redirect to the county page in order to determine the final county-page URL. For Kentucky, that’s 120 pages, which is a lot of HTTP requests. After initially loading them sequentially, we opted to parallelize the requests using the requests-futures package, reducing the time it takes to retrieve the pages. For scraping the final URL components from the redirect pages, we used the lxml library, since we were also using it to parse XML from the results files.
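The idea of issuing the requests concurrently can be sketched with the standard library alone. This example swaps in `concurrent.futures.ThreadPoolExecutor` for the requests-futures package that Clarify uses, and the names `fetch_page` and `fetch_all` are hypothetical. The fetch function is passed in as a parameter so the pattern can be tried without touching the network.

```python
from concurrent.futures import ThreadPoolExecutor
from urllib.request import urlopen


def fetch_page(url):
    """Download one page and return its body as text (performs a real request)."""
    with urlopen(url) as response:
        return response.read().decode("utf-8", errors="replace")


def fetch_all(urls, fetch=fetch_page, max_workers=8):
    """Call fetch(url) for every URL concurrently and return {url: result}."""
    urls = list(urls)
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map yields results in input order, so they line up with urls.
        return dict(zip(urls, pool.map(fetch, urls)))


# Demonstration with a stand-in fetch function instead of real HTTP requests.
demo = fetch_all(["adair", "allen", "anderson"], fetch=lambda name: name.upper())
print(demo)
```

Threads suit this job because the work is dominated by waiting on the network rather than by computation.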

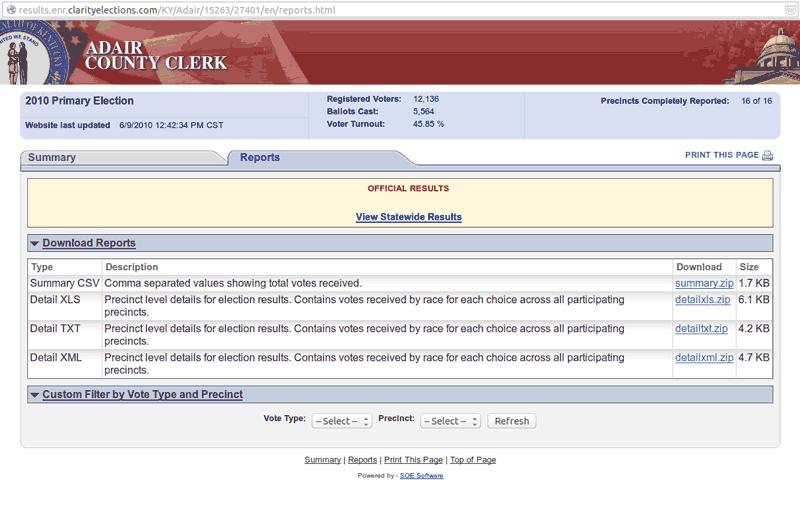

With those URL components in hand, we can now provide direct links to the county-structured data files. This allows users to download and unzip those files (here’s an example URL) and have access to precinct-level data. Clarify currently leaves those tasks up to users; we figured that they would be better suited to choosing how to fetch the .ZIP files and extract the data file contained in them. Once an XML results file is unzipped somewhere, Clarify’s parser is available to load the data into Python objects.

A page that provides links to structured results data. A Jurisdiction instance can provide direct access to the data file URLs.
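Because Clarify leaves downloading and unzipping to the user, here is one possible way to pull the XML file out of a downloaded archive with Python's standard library. The helper name `extract_xml` and the member name `detail.xml` are placeholders for illustration; the sketch builds a tiny archive in memory rather than downloading a real one.

```python
import io
import zipfile


def extract_xml(zip_bytes):
    """Return (member name, contents) for the first .xml file in a ZIP archive."""
    with zipfile.ZipFile(io.BytesIO(zip_bytes)) as archive:
        for name in archive.namelist():
            if name.lower().endswith(".xml"):
                return name, archive.read(name)
    raise ValueError("no XML file found in archive")


# Build a small in-memory archive to stand in for a downloaded results file.
buffer = io.BytesIO()
with zipfile.ZipFile(buffer, "w") as archive:
    archive.writestr("detail.xml", "<ElectionResult />")

name, data = extract_xml(buffer.getvalue())
print(name, len(data))
```

In practice the bytes passed to `extract_xml` would come from whatever HTTP client the user prefers, and the extracted XML could be written to disk before handing its path to the parser.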

Parsing Results

It’s worth repeating here that Clarity sites offer structured data in multiple formats, but that Clarify parses only the XML. That’s because it contains the most detailed data and appeared to be the easiest to parse. That’s not to say that a parser for the CSV or XLS files couldn’t be implemented, though.

We designed Clarify’s Parser object to mirror the XML schema as closely as possible, which means that an instance of the class has attributes representing the summary vote totals and individual contests, and the results contained therein. The schema handles both candidate elections and those that have Boolean choices, such as ballot initiatives, by referring to each vote option as a “Choice.” Thus, a candidate for a Senate seat and a “Yes” vote on a referendum are both choices. Here’s how a candidate choice looks:

<Choice key="1" text="Mitch McCONNELL" party="REP" totalVotes="806787">
  <VoteType name="Election Day" votes="806787">
    <County name="Adair" votes="4900" />
    <County name="Allen" votes="4410" />
    <County name="Anderson" votes="5164" />
    <County name="Ballard" votes="2249" />
    <County name="Barren" votes="8268" />
    <County name="Bath" votes="1777" />
    <County name="Bell" votes="5757" />
    ....
  </VoteType>
</Choice>

Clarify’s Parser builds out a list of contests containing both choices and results and also a list of results jurisdictions, which contain summary information about a particular political level (typically county or precinct). Finally, Parser objects also have a list of results for quick access.
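To show how the Choice structure maps onto Python objects, here is a minimal sketch using the standard library's ElementTree. It is not Clarify's Parser (which uses lxml and handles the whole results file); the function `parse_choice` and the dictionary keys are hypothetical, and the sample is a truncated version of the element shown above, so the county rows do not add up to the total.

```python
import xml.etree.ElementTree as ET

# Truncated sample: only three of the counties are included.
SAMPLE = """
<Choice key="1" text="Mitch McCONNELL" party="REP" totalVotes="806787">
  <VoteType name="Election Day" votes="806787">
    <County name="Adair" votes="4900" />
    <County name="Allen" votes="4410" />
    <County name="Anderson" votes="5164" />
  </VoteType>
</Choice>
"""


def parse_choice(xml_text):
    """Turn one <Choice> element into a dict with per-county result rows."""
    choice = ET.fromstring(xml_text.strip())
    results = []
    for vote_type in choice.findall("VoteType"):
        for county in vote_type.findall("County"):
            results.append({
                "vote_type": vote_type.get("name"),
                "jurisdiction": county.get("name"),
                "votes": int(county.get("votes")),
            })
    return {
        "text": choice.get("text"),
        "party": choice.get("party"),
        "total_votes": int(choice.get("totalVotes")),
        "results": results,
    }


parsed = parse_choice(SAMPLE)
print(parsed["text"], parsed["total_votes"], len(parsed["results"]))
```

The same loop would handle a "Yes" or "No" choice on a referendum, since the schema treats every vote option as a Choice.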

Many Elections, One Schema

Most scraping work is a product of necessity, not convenience, but building Clarify had aspects of both: structured data was available, but we needed to scrape web pages to get at it. While Clarify is a relatively simple idea, building it involved more complexity than we expected. Because we had the luxury of focusing on it for two days together, we wrote tests for our code and were able to deal with unexpected issues like the fact that Clarity provides its institutional users with the ability to “skin” its results template using the styles of their own sites.

We also had to design Clarify to easily deal with whatever level of political jurisdiction it encountered, which is why it doesn’t have specific objects for states, counties, and precincts. Those are the most common political levels, but Clarity’s schema is flexible enough to be used both by states for statewide elections and separately by counties and by cities like Rockford, IL. We also made it so that Clarify runs under Python 2.7.x and 3.4.x, thanks to the useful six library.

OpenElections is focused on historical results, not live election-night reporting, but Clarify could be used by news organizations wanting to keep track of results in real time, since Clarity sites typically provide live reporting. If you do use it for that, we’d love to hear about your experience and any pain points you encountered.

Credits

-

Geoff Hing

Geoff is a Chicago-based freelance news applications developer and reporter. His reporting interests include criminal justice and immigration.

-

Derek Willis

Derek Willis is a news applications developer at ProPublica, focusing on politics and elections. He previously worked as a developer and reporter at the New York Times, a database editor at The Washington Post, and at the Center for Public Integrity and Congressional Quarterly.