Sane Data Updates Are Harder than You Think: Part 3

Third in a three-part series by Adrian Holovaty about hairy data-parsing problems from a journalist’s perspective

An EveryBlock timeline view with an updated story

Welcome to part three of the “hairy data-parsing problems” series! This is a three-part series with battle-scarred advice on how to manage data you get from public records and other databases journalists use. Whether you’re using that data to make a publicly visible mashup or gathering it to write a story, I hope you’ll find these tips useful.

In part one, I introduced the series and focused on how to crawl a database to detect records that are new or changed (i.e., find records that differ from records you’ve crawled in the past). In part two, I showed some techniques you can use to reconcile data between updates.

In this, part three, I’ll talk about what to do when data changes. Two specific questions I want to answer are:

- When should you highlight a change vs. silently change the record in place?

- What should you do if you make a manual fix to a data set that’s otherwise automatically updated?

Highlighting Changes vs. Making Changes Silently

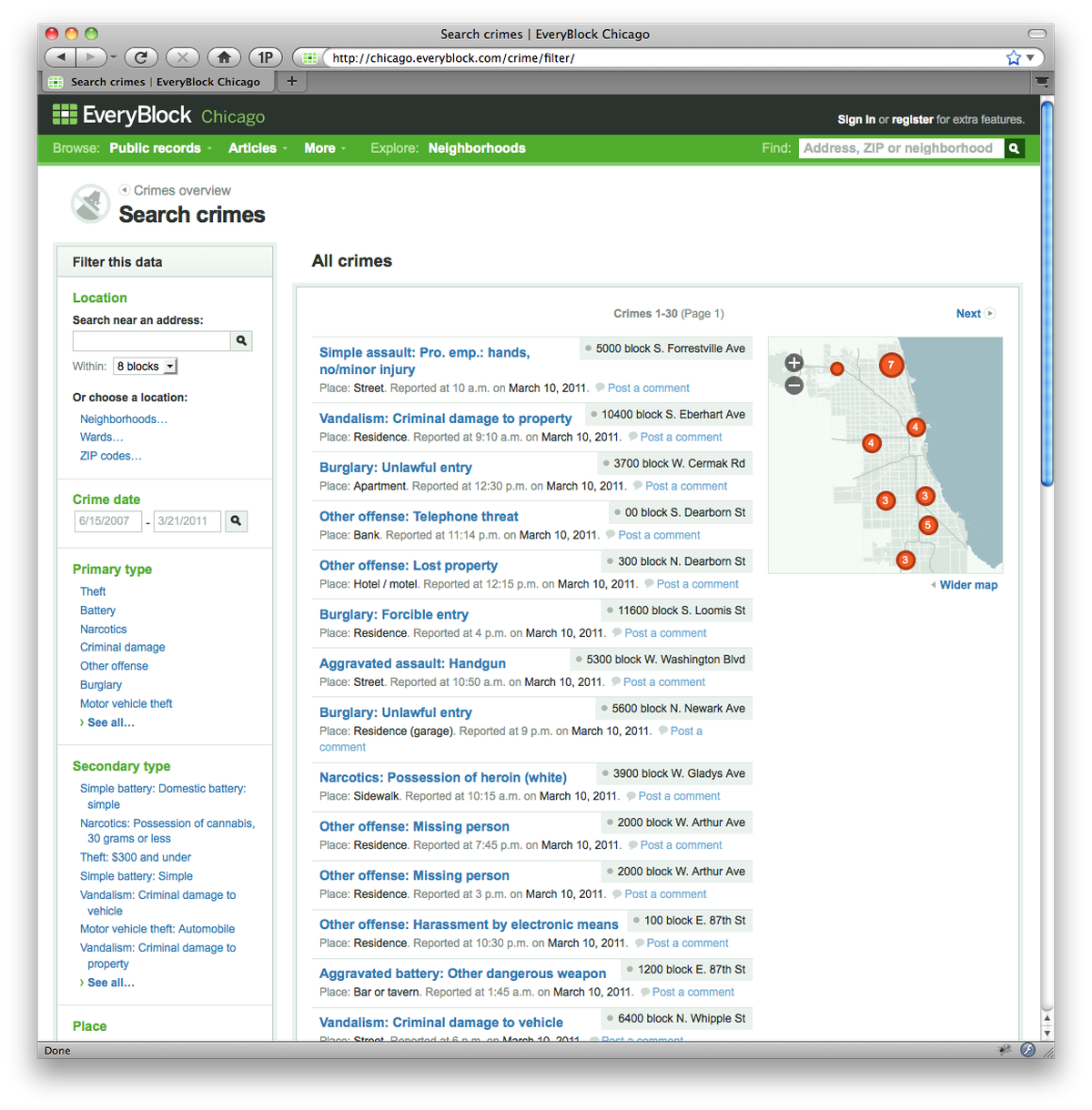

As before in this series, let’s say you run a site that lets people view crime reports by neighborhood, and you get that data digitally from the police every day.

And let’s say you’ve followed the advice from the first two parts of this series. You’re confident your update process is getting the entire data set, and you’re confident you’re reconciling new records with old ones such that you’re not losing data or publishing duplicate records.

A big remaining logistical question for you, then, is: how do you tell your users about changed data?

For example, say your site last week published a crime report of an assault at 123 Main St. But your updater script ran this morning and detected that the police have changed that record’s category from an assault to a homicide. That’s a huge difference, and it may be inappropriate to “silently” change the record in place on your site.

What should you do? It depends on the nature of your site. Some sites are timeline-based, while others are research-based.

If the purpose of your site is to notify city residents of new crimes near them, you should explicitly point out the change to your users. I characterize these types of sites as taking a “timeline-based” approach (as did my old neighborhood-news site EveryBlock). In that case, add a new item in the timeline, along the lines of “Police have recategorized last week’s assault at 123 Main St. as a homicide.” Then remember to update the old item with a link to the update, saying something like, “This assault was later recategorized as a homicide.”

So from the perspective of a user, here’s what she might see on her reverse-chronological timeline:

- Aug. 12: Assault at 123 Main St. from Aug. 7 recategorized as homicide.

- Aug. 8: Robbery, 138 Oak St.

- Aug. 7: Assault, 123 Main St.
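Here's a minimal sketch of that timeline bookkeeping in Python. The `TimelineItem` class, its fields, and the `recategorize` function are hypothetical names invented for illustration (this is not EveryBlock's actual code), and the year in the sample dates is arbitrary.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class TimelineItem:
    item_id: int
    posted: date
    category: str
    address: str
    headline: str
    note: Optional[str] = None          # annotation shown on the original item
    follow_up_id: Optional[int] = None  # id of the later "update" item, if any


def recategorize(timeline: List[TimelineItem], original: TimelineItem,
                 new_category: str, today: date) -> TimelineItem:
    """Publish the change as a new timeline item and annotate the old one."""
    next_id = max((item.item_id for item in timeline), default=0) + 1
    update = TimelineItem(
        item_id=next_id,
        posted=today,
        category=new_category,
        address=original.address,
        headline=(
            f"{original.category.capitalize()} at {original.address} "
            f"from {original.posted.isoformat()} recategorized as {new_category}."
        ),
    )
    timeline.append(update)
    # The old item keeps its original headline; it only gains a note and a link.
    original.note = (
        f"This {original.category} was later recategorized as {new_category}."
    )
    original.follow_up_id = update.item_id
    return update


# Sample data mirroring the example above (the year is made up).
timeline = [
    TimelineItem(1, date(2013, 8, 7), "assault", "123 Main St.", "Assault, 123 Main St."),
    TimelineItem(2, date(2013, 8, 8), "robbery", "138 Oak St.", "Robbery, 138 Oak St."),
]
recategorize(timeline, timeline[0], "homicide", date(2013, 8, 12))

# Reverse-chronological view, as a user would see it.
for item in sorted(timeline, key=lambda i: i.posted, reverse=True):
    print(item.posted.isoformat(), item.headline, item.note or "")
```

The design choice worth noting is that the original item is never rewritten. It only gains a note and a pointer to the follow-up, so anyone who saw the first report can trace what changed.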

If the purpose of your site is more static / research-based, you can get away with the silent data change. Just make it clear that your data is a “snapshot” that was accurate as of your most recent update from the source, and explain that records may change over time.

This distinction of “research-based” vs. “timeline-based” is very important, and it can be tempting to serve both purposes. My advice is: don’t try to do both at the same time!

At EveryBlock, we originally tried to do both—we let people subscribe to timeline updates near them and we let people create custom reports for research. This ended up confusing many people, because in some cases we optimized the data for timelines, and in other cases we optimized for research. We eventually simplified this to focus solely on the timeline. A few researchers were upset about it, but it was the right step for us to take, given our goal of being a daily news site for residents, as opposed to an occasionally used research tool.

Manual Data Fixes vs. Automated Updates

It’s rare for a data set to be 100% perfect; you should expect it to contain errors, regardless of source. As we’ve discussed in this series, it’s important to build a system that can elegantly incorporate data updates automatically—but it’s equally important to handle the case of manual fixes.

Say somebody on your staff finds an error in your crime data—a street name is misspelled, or a crime is miscategorized. You can update it in your local copy, but if your “upstream” data source hasn’t fixed it, your local fix will get overridden next time you run the updater. How do you make sure your local changes don’t get overridden?

A simple solution is to add a boolean column called “is_dirty” to your database. Make it False by default, and set it to True whenever you make a manual change to that record. Then change your updater script to ignore any records that have is_dirty=True. (Obviously, feel free to call the field whatever you want, but the concept of a “dirty” bit of data is well established in the programming world. And, besides, journalists are dirty people.)
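As a rough sketch of the dirty-flag idea, here is one way it could look using Python's built-in `sqlite3` module. The `crimes` table, its columns other than `is_dirty`, and the helper names `make_db`, `manual_fix`, and `apply_upstream` are all assumptions made up for this example; your schema and updater will differ.

```python
import sqlite3

# Columns staff are allowed to correct by hand (hypothetical schema).
EDITABLE_COLUMNS = {"category", "street"}


def make_db() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    conn.execute(
        """CREATE TABLE crimes (
               case_id  TEXT PRIMARY KEY,
               category TEXT NOT NULL,
               street   TEXT NOT NULL,
               is_dirty INTEGER NOT NULL DEFAULT 0  -- False by default
           )"""
    )
    return conn


def manual_fix(conn: sqlite3.Connection, case_id: str, column: str, value: str) -> None:
    """Apply a staff correction and mark the record as dirty."""
    if column not in EDITABLE_COLUMNS:
        raise ValueError(f"cannot edit column {column!r}")
    # The column name is safe to interpolate because it was checked above.
    conn.execute(
        f"UPDATE crimes SET {column} = ?, is_dirty = 1 WHERE case_id = ?",
        (value, case_id),
    )


def apply_upstream(conn: sqlite3.Connection, record: dict) -> str:
    """Insert or update one upstream record, leaving dirty records untouched."""
    row = conn.execute(
        "SELECT is_dirty FROM crimes WHERE case_id = ?", (record["case_id"],)
    ).fetchone()
    if row is None:
        conn.execute(
            "INSERT INTO crimes (case_id, category, street) VALUES (?, ?, ?)",
            (record["case_id"], record["category"], record["street"]),
        )
        return "inserted"
    if row[0]:
        return "skipped"  # a human touched this record; don't overwrite it
    conn.execute(
        "UPDATE crimes SET category = ?, street = ? WHERE case_id = ?",
        (record["category"], record["street"], record["case_id"]),
    )
    return "updated"


conn = make_db()
upstream = {"case_id": "A1", "category": "assault", "street": "Mian St."}
apply_upstream(conn, upstream)                # first crawl: record is inserted
manual_fix(conn, "A1", "street", "Main St.")  # staff fixes the misspelling
print(apply_upstream(conn, upstream))         # next crawl leaves the fix alone
```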

Note there’s a chance that this throws the baby out with the bathwater, because it means no changes to that record will ever get made automatically. If your data source eventually corrects the thing that you manually corrected, along with making some more corrections to that record that you hadn’t corrected manually, you wouldn’t get those other corrections.

Thus, a more complicated, but more correct, solution is to display both things: your manual corrections and the latest automatically obtained data. For example, if you manually correct a crime record from “assault” to “aggravated assault,” your site could say, “The police categorized this as an assault, but we’ve manually corrected that to aggravated assault,” hence giving your users both sides of the story, so to speak. Then add a bit of logic to your app that says, “If the data source eventually updates itself to ‘aggravated assault,’ remove the message.”
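One way to sketch this "show both" approach is to store the upstream value and the manual correction in separate fields, so the updater can always write the latest source data without clobbering staff edits. The `CrimeRecord` class and its attribute names below are hypothetical, and a real record would track more than one field this way.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CrimeRecord:
    case_id: str
    source_category: str                   # latest value from the data source
    manual_category: Optional[str] = None  # staff correction, if any

    def apply_upstream(self, category: str) -> None:
        """Always accept the source's latest value; drop the override once they agree."""
        self.source_category = category
        if self.manual_category == category:
            self.manual_category = None

    @property
    def display_category(self) -> str:
        """The category the site should show: the correction wins while it exists."""
        return self.manual_category or self.source_category

    @property
    def correction_note(self) -> Optional[str]:
        """Message shown to users only while a manual correction is in effect."""
        if self.manual_category is None:
            return None
        return (
            f"The police categorized this as {self.source_category}, "
            f"but we've manually corrected that to {self.manual_category}."
        )


record = CrimeRecord("A1", source_category="assault")
record.manual_category = "aggravated assault"
print(record.correction_note)   # both sides of the story

record.apply_upstream("aggravated assault")
print(record.correction_note)   # None: the source caught up, so the message goes away
```

Because the source value is stored on its own, every other field on the record can keep updating automatically, which the all-or-nothing dirty flag doesn't allow.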

The End

I hope you’ve gotten something out of this three-part series, whether it’s caused you to make hard-core changes to your own projects or merely got you thinking about some of the hairy problems we deal with in data journalism. Thanks for reading!

Credits

- Adrian Holovaty

Adrian Holovaty has been doing data journalism on the web since 2002. He cocreated Django and founded EveryBlock. Now he’s building Soundslice.