Learning:

Using Big Data to Ask Big Questions

Chase Davis lays down some data science upon us to change how you think about the questions you’re asking of your data

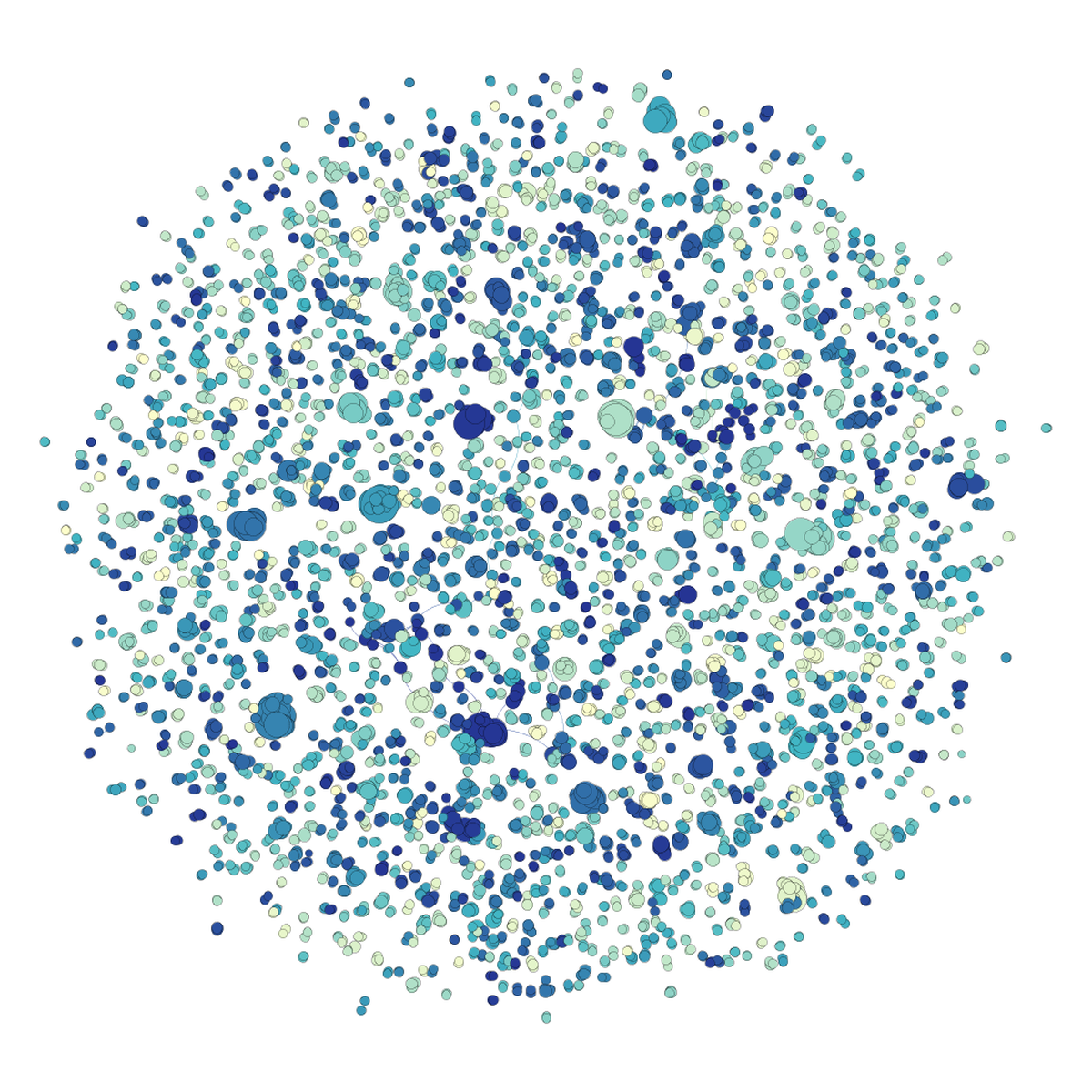

A dot graph of similarities among legislative bills at the state level, courtesy of the awesomeness of data science.

First, let’s dispense with the buzzwords.

Big Data isn’t what you think it is: Every federal campaign contribution over the last 30-plus years amounts to several tens of millions of records. That’s not Big. Neither is a dataset of 50 million Medicare records. Or even 260 gigabytes of files related to offshore tax havens—at least not when Google counts its data in exabytes. No, the stuff we analyze in pursuit of journalism and app-building is downright tiny by comparison.

But you know what? That’s ok. Because while super-smart Silicon Valley PhDs are busy helping Facebook crunch through petabytes of user data, they’re also throwing off intellectual exhaust that we can benefit from in the journalism and civic data communities. Most notably: the ability to ask Big Questions.

Most of us who analyze public data for fun and profit are familiar with small questions. They’re focused, incisive, and often have the kind of black-and-white, definitive answers that end up in news stories: How much money did Barack Obama raise in 2012? Is the murder rate in my town going up or down?

Big Questions, on the other hand, are speculative, exploratory, and systemic. As the name implies, they are also answered at scale: Rather than distilling a small slice of a dataset into a concrete answer, Big Questions look at entire datasets and reveal small questions you wouldn’t have thought to ask.

Can we track individual campaign donor behavior over decades, and what does that tell us about their influence in politics? Which neighborhoods in my city are experiencing spikes in crime this week, and are police changing patrols accordingly?

Or, by way of example, how often do interest groups propose cookie-cutter bills in state legislatures?

Looking at Legislation

Even if you don’t follow politics, you probably won’t be shocked to learn that lawmakers don’t always write their own bills. In fact, interest groups sometimes write them word-for-word.

Sometimes those groups even try to push their bills in multiple states. The conservative American Legislative Exchange Council has gotten some press, but liberal groups, social and business interests, and even sororities and fraternities have done it too.

On its face, something about elected officials signing their names to cookie-cutter bills runs head-first against people’s ideal of deliberative Democracy—hence, it tends to make news. Those can be great stories, but they’re often limited in scope to a particular bill, politician, or interest group. They’re based on small questions.

Data science lets us expand our scope. Rather than focusing on one bill, or one interest group, or one state, why not ask: How many model bills were introduced in all 50 states, period, by anyone, during the last legislative session? No matter what they’re about. No matter who introduced them. No matter where they were introduced.

Now that’s a Big Question. And with some basic data science, it’s not particularly hard to answer—at least at a superficial level.

Analyze All the Things!

Just for kicks, I tried building a system to answer this question earlier this year. It was intended as an example, so I tried to choose methods that would make intuitive sense. But it also makes liberal use of techniques applied often to Big Data analysis: k-means clustering, matrices, graphs, and the like.

If you want to follow along, the code is here.

The input is a list of bill titles introduced in 2013 (in this case from 39 states, not 50), conveniently downloaded from the Sunlight Foundation’s Open States project. In an ideal world you’d also want to get the full bill text, which is a bit harder to track down. But as you’ll see, the titles themselves can be useful too. The output is clusters of bills that are at least nominally similar, if not identical, and were introduced in multiple states.

There are a handful of paths from Point A to Point B, all of which are distinguished from conventional data-journalism-style analysis by one key characteristic: Rather than relying on a specific question, or a heuristic, to trim down the universe of data being analyzed, they start with no assumptions. They ANALYZE ALL THE THINGS!

Let’s think about the simplest way we might approach our question about copycat bills. First, start with a single bill in a single state. In order to find copycats, you’d simply need to write a script that searches bill titles across every other state and looks for ones that are similar. Then do the same for a second bill, and a third, until you make it through your entire list. Basically, just compare every bill to every other bill and boom: problem solved.

Except it’s not. Even if you’re only looking at 10,000 bill titles, that would require around 50 million individual comparisons—doable on a modern computer, but definitely not scalable. A slightly larger universe of 100,000 bills, for instance, would require nearly 5 billion comparisons. You’ll be waiting on that until the sun burns out.

One solution would be to borrow a page from Google and throw computing power at the problem, using techniques like MapReduce and distributed processing. I’ve written about that elsewhere if you want to see how it works, but in this case it’s also like swatting flies with pickup trucks. Total overkill. A slightly more clever approach can find our matches with a lot less muscle, and without sacrificing the simple intuition of our naive approach.

Block All the Things!

Clearly a bill honoring Tim Tebow is entirely different than, say, a bill about water rights in the Mojave desert. So why bother comparing them at all if there’s absolutely no chance they’ll match?

It stands to reason that we could partition our list of bills into buckets containing items that are at least sort of similar. That way, rather than comparing every bill against every other bill, we can just compare bills that are in the same buckets. This takes a lot less muscle. No compu-steroids required.

In data science terms, what we want to do is separate the data into blocks, or clusters. Conveniently, there are algorithms for this: notably k-means clustering, which allows you to specify the number of clusters you want to find and then quickly groups all your bills accordingly.

We do a little preprocessing magic beforehand, mainly splitting up bill titles into words and weighting them based on a measure known as term frequency-inverse document frequency. Tf-idf, as it’s known, gives large weights to words that are rare and important, and smaller weights to words like “the” that tend to appear frequently.

Once that’s done, all of our titles will be represented in what is known as vector space—basically a giant matrix with rows representing documents, columns representing words, and cells that are filled with weights. If you’re visually inclined, you can picture each row in the matrix as a point on an n-dimensional plane. Similar documents will naturally show up as points that are closer together.

K-means clustering runs through that plane and carves it into pieces, with each containing some selection of similar bills. From here, we can run our pairwise comparisons again (in this case, using a measure known as cosine distance) and put bills that meet some threshold of similarity off to the side for further review.

The Results

To make exploration a little easier, my code represents similar bills in graph space, shown at the top of this article. Each dot (known as a node) represents a bill. And a line connecting two bills (known as an edge) means they were sufficiently similar, according to my criteria (a cosine similarity of 0.75 or above). Thrown into a visualization software like Gephi, it’s easy to click around the clusters and see what pops out. So what do we find?

There are 375 clusters in total. Because of the limitations of our data, many of them represent vague, subject-specific bills that just happen to have similar titles even though the legislation itself is probably very different (think things like “Budget Bill” and “Campaign Finance Reform”). This is where having full bill text would come handy.

But mixed in with those bills are a handful of interesting nuggets. Several bills that appear to be modeled after legislation by the National Conference of Insurance Legislators appear in multiple states, among them: a bill related to limited lines travel insurance; another related to unclaimed insurance benefits; and one related to certificates of insurance.

Bills with names like “Firearm Protection Act” seem to be popular, having been introduced in at least four states (and probably more). Those bills would seem to represent pushback against efforts to curtail the purchase of semiautomatic weapons and large-capacity magazines. A little Googling shows that similar language shows up in several of them.

Likewise, a number of laws from the national Uniform Law Commission are making the rounds, as are interstate compacts on subjects like electing the president purely by popular vote. At least seven states introduced various resolutions honoring the Delta Sigma Theta sorority, which apparently turned 100 this year.

This kind of analysis is exploratory in nature. It’s about using algorithms to cut out the 98 percent of data that doesn’t matter so you can focus on the 2 percent that does. This kind of analysis would never support a story or app on its own, but it would provide a starting point that could be fleshed out through boots-on-the-ground reporting and research.

Wrapping Up

There are undoubtedly more elegant ways to solve the title similarity problem, for instance using techniques like locality-sensitive hashing, which basically does the same thing, only faster. But it’s a bit more magical and the intuition is harder to explain.

What’s clear is that the approach involves techniques—and a mindset—that is still relatively new to open data and data journalism. Rather than relying on databases and queries, it relies on algorithms. Code efficiency and memory management actually matter because the analysis is happening at scale. It’s exploratory, more a conversation than an interrogation. And it lets us answer a Big Question, rather than smaller specific ones.

Traditional data journalism techniques are great at finding needles in haystacks, but for that to work you have to have some idea what you’re looking for. In this case, we’re not looking for a single needle in a single haystack—we want to take the entire barn, throw out the 95 percent of hay that doesn’t contain any needles, and leave ourselves with small, needle-filled piles to sort through by hand.

Both approaches have their strengths, and they can work very well together. But the data science approach is still relatively new to us. Every day, people outside journalism are coming up with new and creative ways of looking at complex datasets, and there’s nothing stopping us from using those same techniques for our own benefit.

So make friends with data scientists. And learn math. You’ll see data with a new perspective, ask Big Questions, and no doubt develop new stories and apps that would never otherwise have been possible.

People

Credits

-

Chase Davis

Chase Davis

Chase Davis is a senior digital editor at the Star Tribune in his hometown of Minneapolis. He previously worked as the editor of the Interactive News desk at The New York Times.