What We Learned from Staring at Social Media Data for a Year

BuzzFeed News explored how filter bubbles skew our views, how automation sways social dynamics, and other dystopian things

Displays of BuzzFeed Open Lab fellow Lam Thuy Vo’s work on social media data at the Open Lab’s End of the Year Showcase. (Lam Thuy Vo)

It’s become crucial for reporters to gain a better grasp of the social web. Recent revelations about Russian interference in the 2016 presidential election and Donald Trump’s Twitter-heavy way of governing the U.S. show an ever-growing need to develop literacy around both the usefulness and the pitfalls of social media data.

Our lives online are no longer mere extensions of what happens in the “real world.” We make connections online, good ones and bad, and have experiences online that feel real and formative. We build our worldviews based on the information we are exposed to online. Our umwelt has expanded into the virtual realm.

We now also understand that the social web has increasingly become a space where economically and politically motivated actors game virality for personal gain.

During the BuzzFeed Open Lab fellowship, which wrapped last week, I wanted to investigate this space with code. I wanted to find programmatic ways to explore social media data and develop a deeper understanding of how it can help—and potentially obfuscate—stories that journalists pursue. Below are some of the story archetypes and issues I’ve discovered along the way.

A Megaphone for Politicians and Everyday People

Privacy exists on a scale rather than as a binary: what we consider private and public changes with each audience. What I share with my best friend is different from what I share with my mother, which is different from what I share with colleagues, students, or neighbors.

Online, this scale is distorted. The result is online behavior that can be deeply personal and performative at the same time. Everything we post for more than one person, whether on private or public forums, our timelines, or our Twitter accounts, is sort of public, yet directed at a semi-personal audience that may or may not be listening.

For public figures and everyday people alike, social media has become a way to address the public directly. Status updates, tweets, and posts can bypass older channels of projection like the news media or press releases. And so what we do online, or who we say we are online, should always be taken with a grain of salt. It reflects what we project, perhaps an imagined version of our ideal self.

For politicians, however, these public announcements, these projections of their selves, may become binding statements and, in the case of powerful political figures, harbingers of policies that have yet to be put in place.

Because a politician’s job is partially to be public-facing, researching a politician’s social media accounts can help us better understand their ideological mindset. For one story, my colleague Charlie Warzel and I collected and analyzed more than 20,000 of Donald Trump’s tweets to answer the following question: what kind of information does he disseminate and how can this information serve as a proxy for the kind of information he may consume?

A snapshot of the media links that Trump tweeted during his presidential campaign

Social data points do not paint a full picture of who we actually are, in part because of their performative nature and in part because these data sets are incomplete and therefore open to individual interpretation. But they can serve as useful complements: President Trump’s affiliation with Breitbart online, as shown above, was an early indicator of his strong ties to Steve Bannon in real life. His retweeting of smaller conservative blogs like theconservativetreehouse.com and newsninja2012.com perhaps hinted at his distrust of “mainstream media.”
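A tally of media links like the one in the snapshot can be approximated by pulling the domain out of each linked URL and counting how often each domain appears. The sketch below is a minimal illustration under stated assumptions, not the method used for the Trump story: it assumes tweets have already been collected as Python dictionaries with a hypothetical `urls` field holding fully expanded link URLs (shortened links would need to be resolved first), and the sample domains are placeholders.

```python
from collections import Counter
from urllib.parse import urlparse


def count_link_domains(tweets):
    """Count how often each web domain appears among the links in a list of tweets.

    Assumes each tweet is a dict with a hypothetical "urls" key holding a list of
    already-expanded URLs; tweets without that key are treated as having no links.
    """
    counts = Counter()
    for tweet in tweets:
        for url in tweet.get("urls", []):
            domain = urlparse(url).netloc.lower()
            # Treat "www.example.com" and "example.com" as the same site.
            if domain.startswith("www."):
                domain = domain[len("www."):]
            if domain:
                counts[domain] += 1
    return counts


if __name__ == "__main__":
    # Placeholder tweets using example domains, for illustration only.
    sample = [
        {"urls": ["http://www.news.example.com/story-1"]},
        {"urls": ["https://news.example.com/story-2", "https://blog.example.org/post"]},
        {"text": "a tweet with no links"},
    ]
    for domain, n in count_link_domains(sample).most_common():
        print(domain, n)
```

Sorting with `most_common()` surfaces the sites a given account links to most often, which is the kind of ranking a chart of media links is built on.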

The lesson here is that public statements on social media are interesting indicators of how people, especially public figures, project themselves to others, but they should complement other reporting and be interpreted with caution.

A Stage for (Emotional) Experiences

Many of our interactions are moving exclusively online. As our lives become more tethered to online platforms, we become more vulnerable to, and more emotionally attached to, what happens on them.

In other words, what happens to us on the internet does matter, regardless of how it may or may not manifest itself in the real world. Social behavior online often mirrors social behavior offline—except that online, human beings are assisted by powerful tools.

Take bullying, for instance. Bullying has arguably existed as long as humankind. But now bullies can call on thousands of other bullies in the blink of an eye. They have access to search engines and to digital traces of a person’s life, sometimes reaching as far back as that person’s online presence goes. And they have the means of amplification: one bully shouting from across the hallway is not nearly as deafening as thousands of them coming atcha all at the same time.

Such is the nature of trolling.

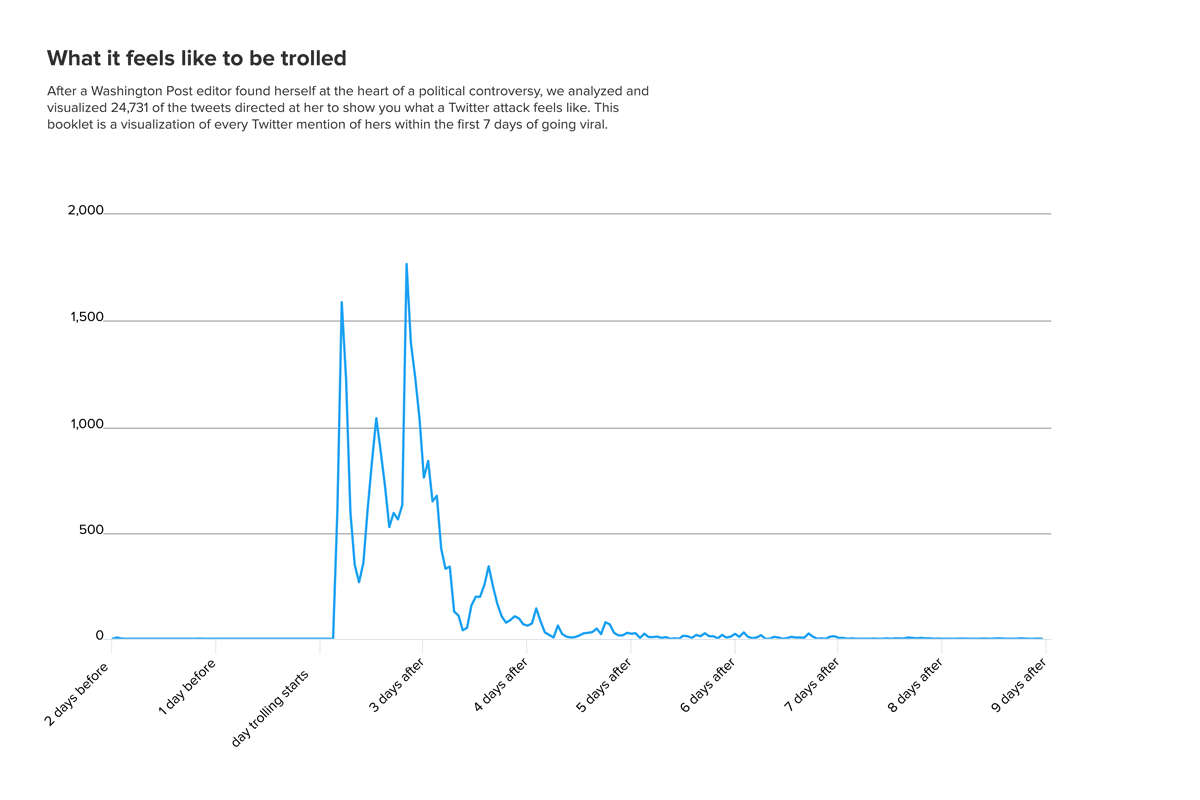

Washington Post editor Doris Truong, for instance, found herself at the heart of a political controversy online. Over the course of a few days, trolls (and a good number of people defending her) directed 24,731 Twitter mentions at her. Being pummeled with vitriol on the internet can only be ignored for so long before it takes some kind of emotional toll.

A chart of Doris Truong’s Twitter mentions starting the day of the attack
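At its core, a chart like this is a count of mentions per day. The sketch below is a minimal illustration, assuming each mention has already been reduced to a timezone-aware `datetime` of when it was posted; how those timestamps are collected (for example, through Twitter’s API) is out of scope, and the function name is hypothetical.

```python
from collections import Counter
from datetime import datetime, timedelta, timezone


def mentions_per_day(timestamps, tz=timezone.utc):
    """Return (day, count) pairs for every day from the first to the last mention.

    Days with no mentions are included with a count of 0 so that a chart does not
    silently skip quiet days. Timestamps are assumed to be timezone-aware; each
    one is converted to `tz` before its calendar day is taken.
    """
    if not timestamps:
        return []
    counts = Counter(ts.astimezone(tz).date() for ts in timestamps)
    current, end = min(counts), max(counts)
    days = []
    while current <= end:
        days.append((current, counts.get(current, 0)))
        current += timedelta(days=1)
    return days


if __name__ == "__main__":
    # Placeholder timestamps, for illustration only.
    stamps = [
        datetime(2020, 1, 1, 14, 0, tzinfo=timezone.utc),
        datetime(2020, 1, 1, 18, 30, tzinfo=timezone.utc),
        datetime(2020, 1, 3, 9, 15, tzinfo=timezone.utc),
    ]
    for day, n in mentions_per_day(stamps):
        print(day.isoformat(), n)
```

Choosing the time zone matters: the same burst of mentions can straddle two calendar days in UTC but fall on one day in the target’s local time.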

Social Dynamics and How to Game Them

We are also starting to see the emergence of social structures online: the formation of “in-crowds” and “out-crowds.” Internet culture is optimized and visually designed to encourage quick, emotional sharing, not thoughtful, nuanced discussion. This means that people are encouraged to jump into the fray based on whatever outrage or joy they feel. There’s little incentive online to slow down, read beyond headlines, and take time to digest before we join our respective ideological crowds in cheering on, or expressing discontent with, a given issue.

Human beings are tribal at their core. It’s easy to follow the urge to fall into groups that affirm our views. Algorithms only steer us further into those corners.

For one story, we analyzed the Facebook newsfeeds of a conservative mother and her liberal daughter. We found that not only were the groups and pages they followed completely different, but the friends who showed up on their feeds also differed entirely.

Both mother and daughter were surprised at just how different their newsfeeds were, given that they both grew up in the same places, love and care about many of the same people, and share a lot of values outside of the realm of politics. They found that their experience online was more divisive than their face-to-face encounters were.
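One simple way to put a number on how different two feeds are is to compare the sets of pages (or friends) each person sees. The sketch below uses the Jaccard index, the size of the overlap divided by the size of the combined set; this is an illustrative choice of measure, not necessarily how the mother-and-daughter story compared the feeds, and the function name and sample page names are hypothetical.

```python
def feed_overlap(items_a, items_b):
    """Compare two collections of followed pages (or friends) between two users.

    Returns the set of items both share and the Jaccard index: the size of the
    intersection divided by the size of the union. 0.0 means nothing in common
    and 1.0 means identical; two empty inputs are reported as 0.0 by convention.
    """
    a, b = set(items_a), set(items_b)
    shared = a & b
    union = a | b
    jaccard = len(shared) / len(union) if union else 0.0
    return shared, jaccard


if __name__ == "__main__":
    # Hypothetical page names, for illustration only.
    mother = ["Page A", "Page B", "Local News"]
    daughter = ["Page C", "Local News", "Page D"]
    shared, score = feed_overlap(mother, daughter)
    print(sorted(shared), round(score, 2))
```

A score near zero for both pages and friends would be one way to express, in a single figure, the finding that two people close to each other offline can see almost entirely separate worlds online.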

Automation, Bots & Cyborgs

Social platforms play to our attachment to “our tribes” and have us gathering in large groups around specific subjects. Where there are a lot of eyeballs, there’s money to be made and influence to be wielded. Enter the new players in this realm: (semi-)automated accounts like bots and cyborgs.

Bots are not inherently evil: plenty of bots delight us with whimsical haikus or self-care tips. But as Atlantic Council fellow Ben Nimmo, who has researched bot armies for years, told me for one story: “[Bots] have the potential to seriously distort any debate […] They can make a group of six people look like a group of 46,000 people.”

The social media platforms themselves are at a pivotal point where they have to recognize their responsibility for defining, and clamping down on, what they deem a “problematic bot.” In the meantime, journalists should recognize the ever-growing presence and power of non-human accounts online.

A graphic that compares tweets from a human to those from a bot. More on how to spot a bot can be found here.
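One behavioral signal often examined when comparing human and automated accounts is sheer posting volume: an account that averages dozens or hundreds of posts a day over its whole lifetime is worth a closer look. The sketch below implements only that single heuristic, assuming you already have an account’s creation date and total post count. The function names are hypothetical, and the 72-posts-per-day default threshold is an illustrative assumption, not a platform rule; a high rate alone does not prove an account is automated.

```python
from datetime import datetime, timezone


def average_posts_per_day(total_posts, created_at, now=None):
    """Average number of posts per day over an account's lifetime.

    `created_at` (and `now`, if given) must be timezone-aware datetimes. The
    account age is floored at one day so brand-new accounts don't produce
    absurdly inflated rates.
    """
    now = now or datetime.now(timezone.utc)
    age_days = max((now - created_at).total_seconds() / 86400, 1.0)
    return total_posts / age_days


def flag_high_volume(total_posts, created_at, threshold=72.0, now=None):
    """Return True if the account averages more than `threshold` posts a day.

    The default threshold is an illustrative assumption. A True result is a
    reason to look closer (timing patterns, content, network), not a verdict.
    """
    return average_posts_per_day(total_posts, created_at, now) > threshold


if __name__ == "__main__":
    created = datetime(2020, 1, 1, tzinfo=timezone.utc)
    checked = datetime(2020, 1, 11, tzinfo=timezone.utc)  # 10 days later
    print(average_posts_per_day(5000, created, now=checked))  # 500.0
    print(flag_high_volume(5000, created, now=checked))  # True
    print(flag_high_volume(300, created, now=checked))  # False
```

Passing `now` explicitly keeps results reproducible; in practice, volume is usually weighed alongside other signals such as round-the-clock activity or near-identical posts.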

Moving Forward

Many phenomena, from trolling to online tribalism, had been observed before the 2016 election but were often painted as events on the fringes of the web. Now people are talking about how misinformation campaigns may imperil elections worldwide, how filter bubbles could be skewing billions of people’s points of view, and how online hate campaigns may affect just about anyone.

With this in mind, it seems imperative to better understand the universe of social data, which includes developing a data literacy specific to the social web. The main takeaways from this research can be broadly structured as follows:

Issues surrounding problematic automation: Not every user is a person; there are automated accounts (bots) and accounts that are partly automated and partly human-controlled (cyborgs). As mentioned above, not every bot or cyborg is bad, but those deployed en masse may be used to skew how we view political issues or events. Increasingly important tasks include understanding what kind of user behavior indicates automation, what motivations lie behind these accounts’ creation, and the scope of their capabilities.

The tyranny of the loudest: Not everyone’s behavior is measured. A vast number of people choose to remain silent. An analysis of a video live stream of one of President Trump’s speeches revealed that only 2–3 percent of those viewing the stream chose to comment or react to it; the remaining 97 percent or so stayed silent.

Emotional responses to a Fox News and Fusion live stream.

What this means is that the content Facebook, Twitter, and other platforms algorithmically surface on our feeds is often based on the likes, retweets, and comments of those who chose to chime in. Those who did not speak up are disproportionately drowned out in this process. Therefore, we need to be as mindful of what is not measured as we are of the conclusions we can draw from the limited social data that is measured.

We are the product, hence our data is not ours: Our data is what makes Facebook, Twitter, and other social media platforms valuable. Every click and like, even our searches and posts, contributes to a massive corpus of data that is more valuable than any marketing research could ever be. It’s longitudinal data about our behavior and, more importantly, an indicator of how we feel about issues, people, and (dare I say it) products. By using social platforms, we opt into giving them power over our data, and they decide how much access we get to it. In many cases that’s very little. This means that we have to find better ways to work with the limited data these companies provide. It also means journalists may need to be more creative in how they report and tell these stories: they may want to buy bots to better understand how bots act online, or purchase Facebook ads to better understand how Facebook works. Whatever the means, operating both within and outside the confines set by social media companies will be a major challenge for journalists as they navigate this ever-changing online environment.

I will continue to explore the social web beyond this fellowship here at BuzzFeed News as a reporter. My research will continue to be updated here, and I’m in the process of writing an instructional coding book to teach novices how to navigate data mining on social media platforms. Please feel free to get in touch.

Credits

Lam Thuy Vo

Lam Thuy Vo is a senior reporter at BuzzFeed News. You can follow her work on Twitter @lamthuyvo.