
John Keefe on leading a news development team

The head of WNYC’s Data Team sits down with Source

As part of our one-year anniversary series, we’re interviewing news apps and interactive features editors about the history of their teams, the challenges they face, and their advice to designers and developers who are interested in newsroom work.

John Keefe (Image courtesy WNYC)

WNYC’s John Keefe comes to coding from the newsroom side and leads one of the most nimble teams in the field. He spoke with Erin Kissane about his own path to programming, the process of building a team from scratch, and the story behind some of his team’s best-known apps and features. (Jennifer Houlihan Roussel, WNYC’s senior director of publicity, kindly sat in with us and made occasional contributions.)

This interview has been edited for length and clarity.

How the team got started

First of all, could you walk us through what your path was to creating the data team and making news apps?

About three years ago, I was the news director leading the WNYC newsroom. We were growing as an organization and starting to do a lot more stuff with breaking news, with elections, and really trying to hold our own. I started seeing what the folks at the New York Times, the LA Times, the Chicago Tribune (Brian Boyer was at the Chicago Tribune at that point), and ProPublica (mainly those four organizations) were doing, and saying, “Whoa.” I’ve been a fan of data journalism for a long time. And here it was, front and center. It wasn’t just “behind the story”; it was searchable, scannable. You could surface it.

I thought that a public radio audience would eat this stuff up. We could do it. There had to be a way for us to do this.

I did a couple of little experiments here and there, but I was the news director—I didn’t have enough of a sense of what needed to be done or how to do it.

So what I did (and I attribute this to the fact that my kids were moving out of the toddler stage, so I ended up with a little bit of extra time at night and even on the weekends) was decide I could start to learn some of this. I could learn from what was out there. And in this beautiful tradition that’s kept going, Brian Boyer, Ben Welsh, Scott Klein, and Chris Groskopf were all starting to blog about all this stuff: “Here’s how we did it.”

Right about this time, one of our reporters, Arun Venugopal, mentioned that the US census data was going to be coming out. That’s the 2010 data, which was released in 2011. He said, “Wouldn’t it be cool to have our own census maps?” And I said, “Yeah, The New York Times is going to do a great job with that.” He countered, “Yeah, but what if we had ’em?”

So I started digging into it. It was tricky. Shapefiles? What’s a shapefile? How do you do this? I started to try to figure it out. Just about that time, I was at the NICAR conference, and NICAR was starting to release reprocessed census data, making it easier. Joe Germuska from the Chicago Tribune showed how to do it. Everybody was just willing to share.

And sure enough, partly because of an issue at the Census Bureau, New York was in the last batch of census data to be released. They were releasing a few states every week, and I was learning and learning and learning, as fast as I could. Then they released it and we were ready for it. It came, we ran the numbers and made the maps and could see where the stories were.

We could see the African-American population drop in Harlem. We could see the Hispanic population drop in Spanish Harlem. We could see where these patterns in different parts of the city were emerging. And reporters went—right away, that day—to those communities. A few hours later, we had census maps showing racial change, population change, ethnicity change. And the Times didn’t. They ran their maps that weekend. We had them the day that the data came out. They weren’t perfect. But we did it.

Then, I happened to be browsing the New York City data mine for hurricane-related stuff—just because, why not? And I saw there was a shapefile. And I now knew how to deal with shapefiles. It was a shapefile of the hurricane evacuation zones for New York City.

I’d actually seen this map printed. At one time, at the previous WNYC building, I had the engineers print out that PDF on blueprint paper, on a plotter. I wanted it because if there’s ever a hurricane, we should know where the reporters should go, where the damage is going to be. (I wish I still had that thing. That would have been perfect. But I think I lost it in the move.) But then I realized: I bet that’s the shapefile they used to make that PDF, which is completely unusable online. What if I made it into a Google map that you could put your address in?

So I made it. It was a fun little exercise. I saw that, “Can I do this? Yeah. Wow. All right, find open data, put it in, Google, searchable, boom.”
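The core of an “enter your address, see your zone” map is a point-in-polygon test: once an address has been geocoded to a longitude/latitude point, you check which zone polygon contains it. The sketch below is an illustration only, not how the WNYC map was built (that map relied on Google’s hosted tools). It assumes the address has already been geocoded, uses the standard ray-casting test, and substitutes hypothetical square “zones” for the real polygons from the city’s shapefile.

```python
from typing import Dict, List, Optional, Tuple

Point = Tuple[float, float]  # (longitude, latitude) after geocoding


def point_in_polygon(pt: Point, ring: List[Point]) -> bool:
    """Ray casting: cast a ray to the right of pt and count edge crossings.

    An odd number of crossings means pt lies inside the polygon ring.
    """
    x, y = pt
    inside = False
    n = len(ring)
    for i in range(n):
        x1, y1 = ring[i]
        x2, y2 = ring[(i + 1) % n]  # wrap around to close the ring
        # Only edges that straddle the horizontal line through pt can cross the ray;
        # this also guarantees y1 != y2, so the division below is safe.
        if (y1 > y) != (y2 > y):
            # x-coordinate where this edge meets that horizontal line
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def find_zone(pt: Point, zones: Dict[str, List[Point]]) -> Optional[str]:
    """Return the name of the first zone containing pt, or None if outside all zones."""
    for name, ring in zones.items():
        if point_in_polygon(pt, ring):
            return name
    return None


# Hypothetical toy zones for illustration; real rings would be read from the shapefile.
ZONES: Dict[str, List[Point]] = {
    "A": [(0.0, 0.0), (2.0, 0.0), (2.0, 2.0), (0.0, 2.0)],
    "B": [(2.0, 0.0), (4.0, 0.0), (4.0, 2.0), (2.0, 2.0)],
}

if __name__ == "__main__":
    print(find_zone((1.0, 1.0), ZONES))  # A
    print(find_zone((3.0, 1.0), ZONES))  # B
    print(find_zone((5.0, 1.0), ZONES))  # None
```

Real evacuation zones are multipolygons with holes, and points exactly on a shared border need a tie-breaking rule, so a production map would lean on a tested geometry library or a hosted mapping service rather than this hand-rolled loop.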

But you didn’t publish it then?

No. I showed it to our other emergency-minded reporter-producer in the newsroom, Brigid Bergin, and she said, “Wow, that’s cool. That’s going to be really useful someday.” Fast forward six weeks, I’m listening to our broadcast in the morning: “Hurricane Irene might be coming this way.” And I have a map for that.

On the subway on the way to work that morning, I added the color key, which I hadn’t done yet. I packaged it together. We put it online and it got a little bit of traffic. The next morning, the mayor announced the evacuation of Zone A.

Two things happened: First, the traffic to our map just went up, up, up, and up. We had a new IT guy. He said, “You guys are getting a lot of traffic on that thing. How much do you think you’re going to get?” I didn’t know. I told him maybe three times what we had. We’d never had that much traffic. He suggested we put it on the Amazon Cloud so it wouldn’t crash.

So he put it on the Amazon Cloud. Simultaneous to this, the city’s map crashed. We were the only ones out there with a map of the evacuation zones. The PDF was out there, but that thing is unwieldy. You can’t search. It’s impossible to use.

We ended up getting something like 21 times the traffic I estimated. And over the next few days, that thing got more traffic than any one thing—any one object ever on the website.

And it stayed up?

It stayed up the whole time. It was hosted on Amazon and with Google Maps.

This map, it happens to do this incredible public service and gets a crazy amount of traffic. It gets embedded everywhere. So that’s kind of the genesis of me not being News Director. That’s really how it ended up taking off. It was this odd mix of tinkering, playing, serendipity, timing, preparedness.

How the team took shape

WNYC seems like a really astonishingly risk-tolerant environment.

I think that’s really key especially now in the current iteration of the Data News team—it permeates everything we do. We don’t want to promise anything we can’t get done, but risk was part of the deal. Prior to building this team, the only programmers here were the ones who were working in the core infrastructure of the operation and making sure the website and audio system were running.

That team just wasn’t designed to work on what I call “news time.” To their credit, they would say, “OK, we’ll make this thing, and then the next time that happens we’ll be prepared for it.” And sometimes that works, and sometimes it doesn’t; sometimes the news is different. So I had to think about what we could do, what we could turn around quickly, on news time. I had to find out how we could use the tools that are out there.

That’s really how it got going. I did this tinkering, and there was some stuff that I could build using Google Fusion Tables and other things. I started teaching myself how to code, so I could build things, and learning JavaScript, Googling every command. I think I’m a better programmer now, but I certainly didn’t know exactly what I was doing.

The station said, “We like what you’re doing. Let’s figure out a way to help you.” For a period of time we contracted on a retainer basis with a small group called Balance Media, which is three people who sort of were our team. We couldn’t do work necessarily on news time, but in a couple weeks, we could put something together, and we did some pretty neat things with them. And then, slowly we started building up our team.

How did you decide how your hiring arc was going to go—who you were going to bring in first, what kinds of skills you needed?

What I was doing by myself for a while was figuring out what I thought would be a good story to tell. And then I’d have to figure out how to make it. I was Googling JavaScript commands into the wee hours of the morning. Then, I was trying to have some sense of minimalist design: “Do one thing well, make it easy to use, make it user-friendly.” Those were the three components:

- The editorial vision.

- The building—not so much backend code, mostly figuring out how something will work and function from the front end.

- Trying to make it not look ugly.

For the longest time it was myself as the editor and Steve Melendez as the coder, who did back-end stuff and is a really great JavaScript coder. And then Louise Ma was the designer. The three of us were for a long time a little troika. We were all supporting each other to get something out the door. It was a team effort.

We’ve added two folks since then, and Steve left the team. Jenny Ye was our intern last year, and she’s now a producer. Mainly her role is to help with other components of this work: How do we get the data? Do we have to negotiate with somebody for the data? Doing some of that hunting. She’s sort of the pinch hitter for everything, including blogging (we have a Tumblr).

It turns out that it was hard for me to keep juggling that kind of stuff and be a part of the editorial process. Having Jenny work on that has been really great. She can also code, so she can jump in and fix things when they’re broken. The care and feeding of the existing things, and the exploration and construction of future things, are really key.

The next person we’ve hired is Coulter Jones. He comes to us from the Center for Investigative Reporting. And he is a sort of old-school (is there such a thing?) data journalist. He used to work in the IRE library helping journalists around the country work with datasets.

We have a product, a site called SchoolBook, which has all the NYC public schools’ information and needs to be updated. It normalizes all of this crazy data from the Department of Education and other sources to put things on an easy scale. It’s run by this really big back-end database and a bunch of code. We used to do it with the New York Times—we do it all ourselves now, so we needed somebody to maintain that.

Coulter’s a little bit more specialized, but it’s really about having a data journalist on the team. He also supplements another investigative reporter, Robert Lewis, who was just recently hired in the newsroom. Robert is also really good with data.

We have openings for a JavaScript front-end coder and a senior producer, and we hope to also hire someone who’s more focused on back-end systems.

And that’s sort of the next level. There’s a one-person shop. There’s the editor, designer, coder team, and then there’s this next level.

But actually—and maybe I’ll regret saying this—I don’t want to get much bigger than that. I feel like we’re a lean little team. We’re a really generous size for our organization. We can do a lot of good work with that size team and I think that’s where we’ll probably stick.

Experimenting at hack days

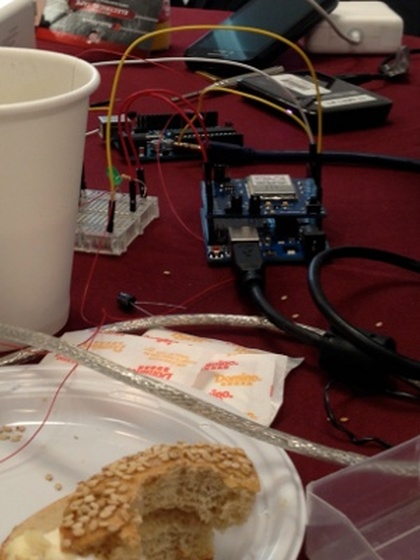

Fuel and hardware from the cicada project hack day

I wanted to specifically ask about the in-house hack days, which you’ve done a couple of now?

There was one big one. There have been a lot of internal brainstorming sessions. The crazy thing about the hack days that we’ve participated in is that they’ve led to very real things. I’ve been to so many hackathons where you leave thinking, “Wow, that was cool, that was neat,” and then, you never hear about any of that again. And, in some ways, that’s not a problem. I learned so much and made so many contacts at other hack days. Six months later, I use that thing I learned or remember I met someone who does something we want to do. They’re beneficial.

But I have been really surprised at how hack day to product has happened. The cicada project is a great example of that. It ended up kind of a crazy success. But it’s not the only one.

Another example is that our team, and some other folks from the web digital team, went to The New York Times for the Sandy coastal memories map. And we used it again: Map Your Favorite Splash Spots.

That came out of a hackathon and we used it right away. I’m a big fan of that.

Lessons learned

One final thing—if you had one piece of advice for someone starting one of these teams or starting as a team of one, what would it be?

Make stuff. Really. Matt Waite has said for years, “Show don’t tell. Just do it.” And I think that’s true for coding. I think it’s true for learning to code. Just make it. Think of what you want to make and make it. The process of figuring out how to do it is how you learn. Read what other people have written, Google it, Stack Overflow, whatever it takes.

It doesn’t even have to be stuff that you actually are going to put on your company’s website, but put it online. Show it to your friends. Show it to your mom. Do something. Make something that you can share.

The trick that we did (it wasn’t a trick, but it was helpful) is that I figured out a way to share what I made. We iFramed it into a web article. The whole hurdle of having to know how your CMS works, messing with any of the code, or taking down your company’s site: you don’t have to get into any of that. An iFrame is just a window that’s serving up another page. The worst thing that can happen is that the window is blank. That’s embarrassing, but at least it’s not taking down the entire site. The other benefit is that all of the advertising, the tracking, the load balancing, everything that your brilliant digital or IT department has worked out so that stuff works and makes money and can be tracked, still applies, because it’s all there and you’ve just iFramed into an existing thing.

That turned out to be really beneficial because no one was nervous about me taking down the site. The worst thing that could happen was my thing wasn’t working, it would look bad, and I would have to fix it. But that was small potatoes compared to what else was possible. The upside was that when it did work well, it was very much there.

Then the last thing I would say is to put tracking code on what you make, so you can measure your success. We put Google and Chartbeat tracking code into every project. And when it gets embedded, we can know how many people see it, even on other sites.

We have a tradition of allowing anybody to embed projects if the data we have is licensed in a way that lets us share it, which 99 percent of the time it is. Because the tracking code travels with the embed, we can see how much traffic a thing is getting even if it’s on Yahoo! or Salon.

Those are the two: make stuff and put your own tracking code on it. If it’s good enough for publication, iFrame it in. Everything grew from there.

Credits


John Keefe

John Keefe is the Senior Editor on the Data News Team at WNYC, which helps infuse the station’s journalism with data reporting, maps and sensor projects. Previously Keefe was WNYC’s news director for nine years. He’s on the board of the Online News Association and is an advisor to CensusReporter.org. Keefe tweets at @jkeefe and blogs at johnkeefe.net and datanews.wnyc.org.


Erin Kissane


Editor, Source, 2012-2018.