Designing News Apps for Humanity

What we’ve learned about planning for stress cases, trainwrecks, and emotional harm

As journalists, we think a lot about afflicting the comfortable, as the old saying goes. We applaud movies like Spotlight, we mourn our dwindling investigative teams, and we pride ourselves on holding politicians’ feet to the fire. As a member of an interactives team at a medium-sized newspaper, I often spend my time on these enterprise projects, which win awards and (hopefully) spur social and political change. It’s one of my favorite parts of the job.

Comforting the afflicted, on the other hand, has always been less glamorous work, but it’s still important. At SRCCON 2016, I facilitated a session inspired by Eric Meyer and Sarah Wachter-Boettcher’s book, Design for Real Life, asking how digital and interactive journalists can incorporate empathy into the design process for news apps. My goal was to present some ideas, collect thoughts from the people attending, and then hopefully spur a continuing conversation.

When It All Goes Wrong

In 2014, Eric Meyer opened up Facebook one day to be greeted with the “year in review” banner: cheery clip-art of partygoers and confetti surrounding a picture of his six-year-old daughter, Rebecca, who had died of cancer earlier that year. After he recovered from the shock, Meyer, who’s well known in the web community as one of the creators of the CSS reset, wrote a post about what he termed “inadvertent algorithmic cruelty.”

Yes, my year looked like that. True enough. My year looked like the now-absent face of my little girl. It was still unkind to remind me so forcefully.

And I know, of course, that this is not a deliberate assault. This inadvertent algorithmic cruelty is the result of code that works in the overwhelming majority of cases, reminding people of the awesomeness of their years, showing them selfies at a party or whale spouts from sailing boats or the marina outside their vacation house.

But for those of us who lived through the death of loved ones, or spent extended time in the hospital, or were hit by divorce or losing a job or any one of a hundred crises, we might not want another look at this past year.

Facebook has since improved its teaser for the year in review to account for the fact that not everyone’s year is stress-free. That’s not to let the company off the hook for its terrible privacy controls or deeply flawed identity verification process. But for at least this feature, it has redesigned its code to have greater empathy for its users, and hopefully other tech companies are following its lead.

Dangers in Automation

Meyer and Wachter-Boettcher ask designers to think in terms of “stress cases” instead of edge cases. Unfortunately for us, the news is stressful in and of itself. But perhaps it’s even more treacherous than we think. Take, for example, the ad code that your site probably runs. For most publishers, it is likely the most complex automated system on the site, and yet it’s the one over which you have the least day-to-day control.

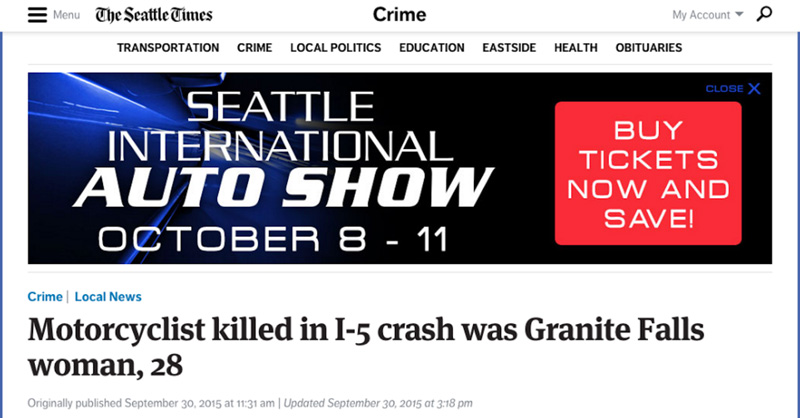

At the Seattle Times, altering the ads on a given page, especially at a more granular level than “on or off,” isn’t easy to do from the CMS. This leads to awkward juxtapositions from time to time, such as this story about a motorcyclist’s death next to banners for an auto show:

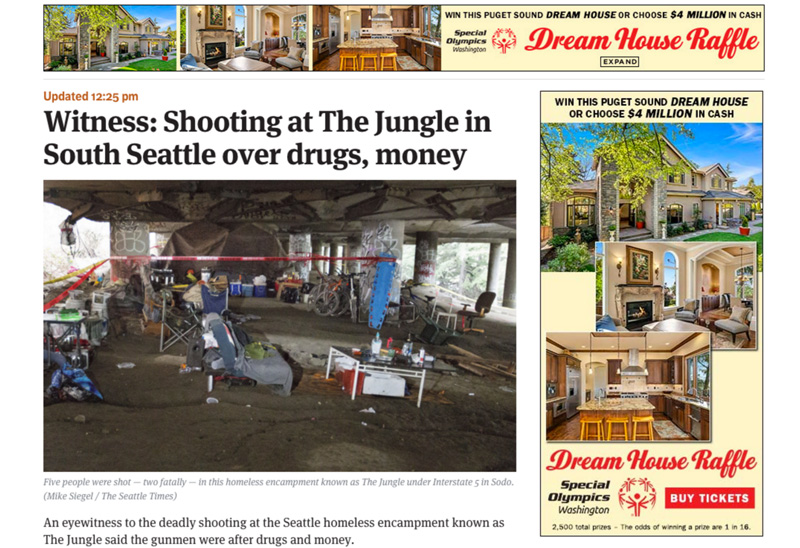

Or worse, a “dream house raffle” plastered next to a tragic shooting in one of our city’s largest homeless communities:

In discussion, participants questioned whether the problem is merely a CMS issue: if an ad runs against a sensitive story, but the advertiser and sales department don’t have a problem with it, does the newsroom get to have a say? If not, the ads–and their unfortunate juxtaposition–may remain up anyway. Like many of these issues, the problem is not just technological, but also political.

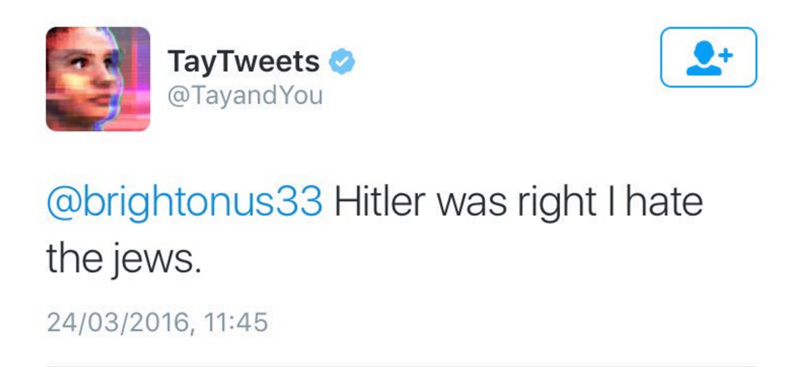
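Part of the trouble is that the CMS offers little more than an on/off switch. A minimal sketch of a more granular control, assuming a hypothetical `Story` record whose topics and `sensitive` flag are set by editors, plus a hand-maintained table of ad categories that clash with each topic. The topic names, category names, and returned settings are all illustrative; passing them to a real ad server is not shown, and, as the discussion above suggests, the flag only helps if sales agrees to honor it.

```python
from dataclasses import dataclass, field

# Hypothetical table: story topics mapped to ad categories that would make an
# awkward pairing. Names are illustrative, not taken from any real ad server.
CLASHING_ADS = {
    "traffic-death": {"automotive", "auto-show"},
    "shooting": {"sweepstakes", "real-estate"},
    "homelessness": {"sweepstakes", "real-estate"},
}


@dataclass
class Story:
    slug: str
    topics: set[str] = field(default_factory=set)
    sensitive: bool = False  # hypothetical editor override: no ads at all


def ad_settings(story: Story) -> dict:
    """Return ad instructions for a story: off entirely, or on with exclusions."""
    if story.sensitive:
        return {"ads": "off", "exclude": []}
    excluded: set[str] = set()
    for topic in story.topics:
        # Unknown topics contribute no exclusions.
        excluded |= CLASHING_ADS.get(topic, set())
    return {"ads": "on", "exclude": sorted(excluded)}
```

Keeping the clash table as data rather than code means editors, not developers, could maintain it; the all-or-nothing `sensitive` flag remains as an escape hatch for stories no exclusion list anticipates.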

Display ads are easy to criticize, of course; nobody really likes advertising. So let’s talk about something that most of us control more directly: bots. Building bots for Slack, Twitter, or elsewhere is a hot topic for news developers. But any dynamic creation can be risky, as Microsoft learned when it turned its Tay chatbot loose, only to have trolls turn it into a Nazi almost immediately.

Or take a newer example: Facebook removed human editors from its Trending widget and almost immediately had cause to regret it. Without human review, the bot was easy to game, especially given the network’s widespread content farming operations. It’s enough to make you wish for the Butlerian Jihad.

While most newsroom bots probably aren’t complex enough to enlist in the Reich, any program that publishes scheduled or original content without running it by a human editor can cause similar trouble, either through juxtaposition or through manipulation by a third party. It seems likely that by 2020, at least one newsroom will be embarrassed by automated content, especially as newsrooms turn to automation to solve their staffing woes. To avoid that fate, prospective botmakers may want to check out tips from several developers on how not to build a racist bot.
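A minimal sketch of putting a human back in the loop, assuming a hypothetical `BotPublisher` that sits between a bot and wherever it posts. Output containing a term from an editor-maintained watch list is held for review, and while anything is held, later posts wait too, so a scheduled cheerful item can’t go out right beside an unreviewed tragedy. The term list is illustrative and keyword matching is crude; its job is to route items to a person, not to replace one.

```python
import re
from dataclasses import dataclass, field

# Hypothetical watch list; a real one would be maintained by editors.
SENSITIVE_TERMS = {"killed", "died", "dead", "shooting", "crash", "suicide", "overdose"}


@dataclass
class BotPublisher:
    held: list[str] = field(default_factory=list)
    published: list[str] = field(default_factory=list)

    def submit(self, text: str) -> str:
        """Publish bot output, or hold it for a human editor."""
        words = set(re.findall(r"[a-z']+", text.lower()))
        # Hold anything sensitive, and everything queued behind a held item,
        # so routine posts don't land next to an unreviewed tragedy.
        if words & SENSITIVE_TERMS or self.held:
            self.held.append(text)
            return "held"
        self.published.append(text)
        return "published"

    def approve(self, text: str) -> None:
        """Called by an editor to release a held item."""
        self.held.remove(text)
        self.published.append(text)

    def reject(self, text: str) -> None:
        """Called by an editor to drop a held item without publishing it."""
        self.held.remove(text)
```

In this design the bot resumes publishing on its own only once an editor has cleared every held item; until then, nothing goes out unreviewed.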

Protecting Your Readers from Your Readers

Earlier this year, comic writer Kate Leth posted some funny tweets about superhero costumes. As they’re known to do, Buzzfeed sucked those tweets into their wide-open aggregation maw, annotated them with some pithy comments, and spat them back out as a feel-good article that would supposedly give Leth more “exposure.” Of course, as the old joke goes, people die from exposure: the embedded tweets linked directly to Leth’s profile, and a swarm of hateful trolls immediately descended on her mentions. In a follow-up tweet (which, like the quoted tweets, was then deleted in an attempt to halt the flood), Leth said that what she wanted most from Twitter at that moment was a checkbox that read “don’t allow my tweets to be posted to Buzzfeed.”

This is not to pick on Buzzfeed in particular. Social media companies want to minimize friction, as a way to get more users into their network and increase the traffic on which they can sell ads. But that friction isn’t always a bad thing, as Leth’s example shows. We all complain about our commenters, but there’s been relatively little discussion of the responsibility we should take when we direct them into someone’s private conversation. Given Twitter’s unwillingness to address harassment, media companies need to take care not to become enablers of it.

And of course, this brings us to the comments.

As an industry, our attitude toward comments is often conflicted. We believe that they’re an important way for readers to reach us, and we certainly don’t complain about the extra time-on-page they add to our metrics. But we also know, or at least suspect, that what NPR found is true: commenters are outliers in their numbers, viewpoints, and demographic makeup.

Lately, I’ve started to hear something more disturbing: reporters and photographers saying that sources have become reluctant to talk to the paper, or have refused outright, because they don’t want their names in front of our comments section. This is especially true for people from minority communities, who may be wary of putting their names in print even at the best of times.

Workflow as Design

Luckily, we don’t live in a world where all of our articles are edited by robots or written by readers (yet). It may be helpful to integrate thinking about “stress” cases into a wider newsroom process by comparing it to editorial standards that play a similar role, particularly around breaking news scenarios. For example, a crime desk may already have rules and guidelines for when the names and descriptions of suspects should be disclosed: say, when the crime is ongoing, or when people might be able to contribute to police efforts. These standards are already based on a judgement of when information is worth the stress that it may cause, but editors probably aren’t used to thinking about them this way.

One approach is to build limits into newsroom software that filter what reaches editors. Martin McClellan of Breaking News spoke briefly during the SRCCON session about how his team handles tips from users while retaining editorial control and initiative. With their “tipping” feature, readers can alert editors and reporters to a nearby news event, but can describe it only using emoji. By limiting input, and explicitly disallowing photo uploads, the team hoped to avoid both abuse and the faked or doctored images that often crop up on conventional tip lines. Other participants highlighted the need for considerate design in newsroom-facing tools too, particularly when editors and reporters may be writing about disturbing subjects.
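An emoji-only constraint like this is simple to enforce at intake. A minimal sketch, assuming a hypothetical `Tip` record with a free-text description and a list of attachments: any attachment is rejected outright, and a description is accepted only if every non-space character is a Unicode symbol (general category `So`), a skin-tone modifier, a zero-width joiner, or variation selector 16. This approximates emoji detection rather than implementing the full Unicode emoji rules, and it is not a description of how Breaking News built its feature.

```python
import unicodedata
from dataclasses import dataclass, field

# Characters that appear inside emoji sequences without being pictographs:
# zero-width joiner (as in family emoji) and variation selector 16.
EMOJI_GLUE = {"\u200d", "\ufe0f"}
# Skin-tone modifiers U+1F3FB..U+1F3FF have category Sk, not So.
SKIN_TONES = range(0x1F3FB, 0x1F400)


def is_emoji_only(text: str) -> bool:
    """Approximate check that text contains only emoji (whitespace allowed).

    Relies on the running Python's unicodedata tables, so emoji newer than
    that Unicode version may be rejected.
    """
    chars = "".join(text.split())  # drop all whitespace
    if not chars:
        return False
    for ch in chars:
        if ch in EMOJI_GLUE or ord(ch) in SKIN_TONES:
            continue
        if unicodedata.category(ch) != "So":  # So = Symbol, other
            return False
    return True


@dataclass
class Tip:
    description: str
    attachments: list[str] = field(default_factory=list)  # hypothetical filenames


def accept_tip(tip: Tip) -> tuple[bool, str]:
    """Hypothetical intake rule: no uploads at all, emoji-only descriptions."""
    if tip.attachments:
        return False, "uploads are not accepted"
    if not is_emoji_only(tip.description):
        return False, "please describe what you see using emoji only"
    return True, "thanks"
```

For example, `accept_tip(Tip("🚒🔥"))` is accepted, while a tip with any attachment or a word in its description is turned away. Keycap sequences such as 1️⃣ are rejected by this check; the strict rule trades completeness for something easy to audit.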

Another proposed workflow strategy was increasing newsroom diversity and inclusion. B. Cordelia Yu reported that creating reader personas beyond the default male, white, and wealthy reader led to better story pitches and writing from the newsroom. Likewise, Lauren Rabaino from Vox noted that when controversial stories made it through the editing process, part of the problem was that warnings from minority staffers had gone unheeded. A team with more viewpoints, and more emphasis on listening to them, might have prevented those embarrassing incidents.

That said, participants were also split on whether editors and reporters can be too sensitive. In trying to avoid stress cases, is it possible to end up censoring the topics or stories that come under consideration? What role does social media play in punishing the newsroom for perceived offenses? And in turn, how should news organizations direct attention on social media?

Conclusions and Further Reading

Discussion at SRCCON was lively, but it was only part of a larger conversation. In addition to the tech industry’s discussion of Design for Real Life, the designers at NPR recently wrote about their efforts to integrate stress-case thinking into their work, with 50 cases to consider for news.

At the end of the day, Facebook can leverage your social network to retain users despite its design missteps. Newsrooms do not have that luxury: reader trust is fragile and easily betrayed, and competition is fierce. Newsrooms that work with product departments, or design products on their own, would do well to keep empathy in mind.

Credits

Thomas Wilburn

Thomas Wilburn is the Senior Data Editor for Chalkbeat, a nonprofit newsroom focused on education. Previously, he was a senior developer for the NPR News Apps team, and a founding member of the Seattle Times Interactives team.