
Introducing MinnPost’s Election Night API

An API That Collects and Serves Election Results While Conserving Resources

For the past few years, on general and primary election night, MinnPost has published our Elections Dashboard, where readers can get live data on every single race across all of Minnesota. It’s consistently one of our most viewed pieces of the year. Behind the scenes, the Elections Dashboard has two main parts: the scraper-powered API and the front-end Dashboard.

We made this because, as a small, non-profit organization, we can’t afford AP or Reuters elections data feeds, and as a state-centric organization, we really want to focus on local, state, and national races in the context of Minnesota—results that aren’t always available in feeds from national organizations. To save money, we designed the app to run on big servers on election nights when traffic is really high but to be hosted cheaply on a third-party service afterward when demand for the data subsides. And finally, we were able to do this because the Minnesota Secretary of State’s Office offers a pretty good feed of data on election night.

Figuring that other organizations might have similar needs, we decided to use the time provided by the Code Convening to abstract our application to be used by others. Specifically, we focused on the API part of the application and the Election Night API was born. (Yes, its name is really mundane.)

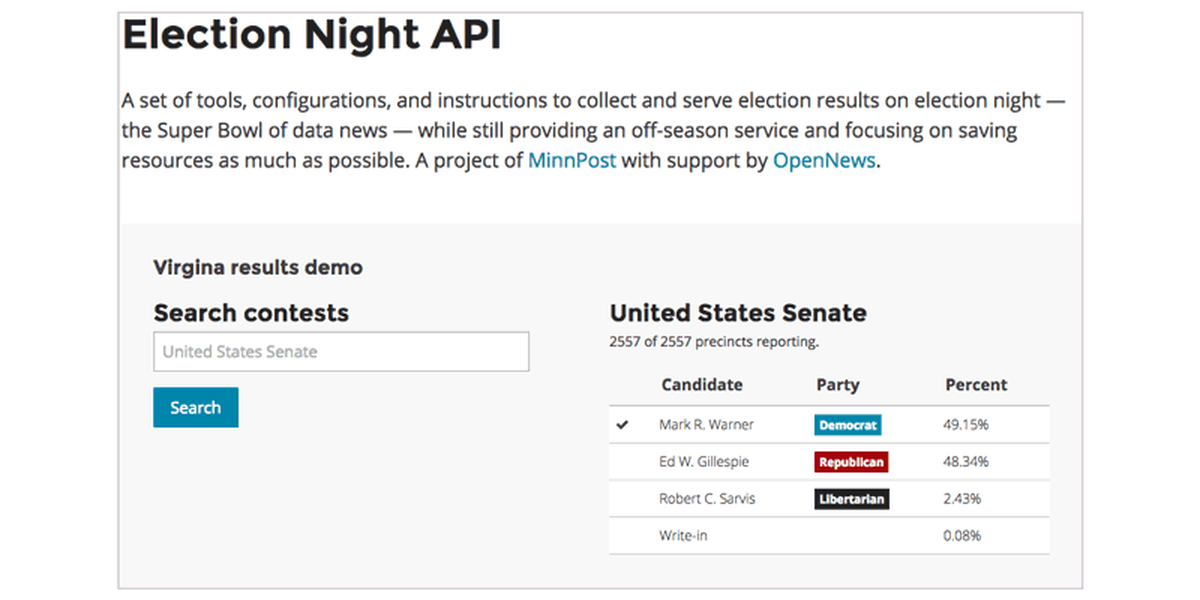

The Election Night API is a set of tools, configurations, and instructions for collecting and serving election results on election night while still providing an off-season service, all with a focus on using as few resources as possible.

Data Collection

The first thing the Election Night API needs is good election data. The API provides a command line utility for data collection. Since each state is different, when and how the data needs to be collected will vary.

Since we work with it every year, we have good data collection for MN (though it does have to be updated each year as different kinds of elections come up—ranked-choice voting, anyone?). Tom spent most of the Code Convening writing a scraper for Virginia election results as a proof-of-concept. The scraper mostly works, but would need to be fine-tuned by someone with knowledge of local elections to be usable on election night.

Code snippet:

# Get all the Virginia results into the database

$ ena VA results

# Get the Minnesota question text (separated out since it does not need to run often on election night)

$ ena MN questions

The data collected is very open-ended—only a small number of fields are required. This means you can add on any fields—and even tables—that are needed for your state and election. There is also an easy method to connect to a Google Spreadsheet and use data manually updated by your newsroom. This was useful for managing data related to ranked-choice voting in Minneapolis and St. Paul in 2013.
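To illustrate what an open-ended schema like this can look like in practice, here is a minimal sketch using Python's built-in `sqlite3` module. It is not the Election Night API's own code: the `save_row` helper, the `contests` table, and the `id`, `title`, and `ranked_choice` fields are all hypothetical, chosen only to show how a table with one required field can grow extra columns as a state or election needs them.

```python
import sqlite3


def save_row(conn, table, row):
    """Insert a dict into `table`, adding any columns the table lacks.

    Hypothetical helper: in this sketch only `id` is a required field.
    """
    conn.execute(f'CREATE TABLE IF NOT EXISTS "{table}" (id TEXT PRIMARY KEY)')
    # Column names already present in the table (index 1 of each info row).
    existing = {info[1] for info in conn.execute(f'PRAGMA table_info("{table}")')}
    for column in row:
        if column not in existing:
            conn.execute(f'ALTER TABLE "{table}" ADD COLUMN "{column}"')
    columns = ", ".join(f'"{c}"' for c in row)
    placeholders = ", ".join("?" for _ in row)
    conn.execute(
        f'INSERT OR REPLACE INTO "{table}" ({columns}) VALUES ({placeholders})',
        list(row.values()),
    )


conn = sqlite3.connect(":memory:")
# A row with only the basic fields.
save_row(conn, "contests", {"id": "mn-governor", "title": "Governor"})
# A later row brings an extra, election-specific field with it.
save_row(conn, "contests", {"id": "mpls-mayor", "title": "Mayor", "ranked_choice": 1})
rows = conn.execute(
    "SELECT id, title, ranked_choice FROM contests ORDER BY id"
).fetchall()
```

Rows saved before the extra column existed simply hold an empty value for it, so older data stays valid as the schema grows.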

We are hoping to expand this tool to other states with help from journalists working in them. We may even be able to use another Code Convening project—Clarify from Open Elections—to more easily get data from states that use Clarity election software.

The API

To keep the costs of running the Election Night API to a minimum, it is designed so that it can be moved from a dedicated server on election night, which is necessary to cope with high demand from readers, to the low-cost, even free, ScraperWiki platform. ScraperWiki is a powerful data collection service that provides an open HTTP API for SQL queries. This means the API has no specific endpoints besides the query endpoint; for example:

Code snippet:

# Selects up to 10 contests (the query must be URL-encoded)

$ curl -G "http://election-night-api.example.com/" --data-urlencode "q=SELECT * FROM contests LIMIT 10"
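Because the whole query travels in the `q` parameter, a client has to URL-encode the SQL before sending it. The following sketch shows one way to build such a URL with Python's standard library. The host is the placeholder from the example above, and the `query_url` helper is a hypothetical name used only for illustration.

```python
from urllib.parse import urlencode

# Placeholder host taken from the example above, not a live service.
API_BASE = "http://election-night-api.example.com/"


def query_url(sql, base=API_BASE):
    """Return the API URL for a SQL query, with the query safely encoded."""
    return base + "?" + urlencode({"q": sql})


url = query_url("SELECT * FROM contests LIMIT 10")
```

The resulting URL can be passed to any HTTP client. Encoding matters here because raw spaces and `*` characters in a URL are easy for shells and servers to misread.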

There’s also a local development version of the API built as a Flask application; this should definitely not be used for production.
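To make the single-query-endpoint idea concrete, here is a rough sketch of what a service of this kind does with the `q` parameter once it arrives: run the SQL against the results database and return the rows as JSON. This is not the project's Flask code and the response format is an assumption. The `run_query` function, the table layout, and the sample row are hypothetical, and the prefix check is only a token guard, not real protection against unwanted queries.

```python
import json
import sqlite3


def run_query(conn, sql):
    """Run one SELECT statement and return the rows as a JSON string."""
    # Token guard for the sketch: refuse anything that is not a SELECT.
    if not sql.lstrip().lower().startswith("select"):
        raise ValueError("only SELECT queries are allowed in this sketch")
    cursor = conn.execute(sql)
    names = [column[0] for column in cursor.description]
    return json.dumps([dict(zip(names, row)) for row in cursor])


# Hypothetical in-memory database standing in for the collected results.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE contests (id TEXT, title TEXT)")
conn.execute("INSERT INTO contests VALUES ('mn-governor', 'Governor')")

body = run_query(conn, "SELECT * FROM contests LIMIT 10")
```

A web framework such as Flask would wrap a function like this in a route that reads `q` from the request and sends `body` back as the response.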

On election night, the Election Night API needs to be installed on its own server, specifically something with a significant amount of power (CPU, memory, and disk I/O). We used AWS EC2. Full instructions are provided to get this up and running.

The Future

We really hope this can be useful for other newsrooms with similar needs: low cost and local results. The biggest job ahead is creating and maintaining the data collection tools for each state and election.

We also hope to make the tool easier to use and embrace it fully as a command-line utility; right now it’s a command-line tool and a set of configurations. This would allow for easier instructions and more robust configuration.