
Introducing autoEdit: Video Editing Made Better

A faster and easier way to edit video with an eye on story craft and collaboration

Making video editing easier with the power of transcription.

As a 2016 Knight-Mozilla fellow with Open News, I’ve been working with Vox Media’s product team and Storytelling Studio on tools for video producers. After spending a month doing internal user research, I decided to build an application that enables more efficient and accessible video editing of interviews.

This new Mac OS X desktop app, autoEdit, creates an automatic transcription of a video or audio file. The user can then make text selections and export them as a video sequence to the editing software of their choice.
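The idea of turning a text selection into video can be sketched in Python. This is a minimal illustration, not autoEdit’s actual data model: it assumes the speech-to-text service returns word-level start and end times, and the `Word`, `Segment`, and `selection_to_segment` names are hypothetical. Because a selection is a contiguous run of words, it maps to a time range running from the first word’s start to the last word’s end.

```python
from dataclasses import dataclass


@dataclass
class Word:
    """One transcribed word with start/end times in seconds (hypothetical shape)."""
    text: str
    start: float
    end: float


@dataclass
class Segment:
    """A time range in the source media, in seconds."""
    start: float
    end: float


def selection_to_segment(words: list[Word], first: int, last: int) -> Segment:
    """Map a text selection (word indices first..last, inclusive) to a media time range."""
    if not 0 <= first <= last < len(words):
        raise IndexError("selection is outside the transcript")
    return Segment(start=words[first].start, end=words[last].end)


transcript = [
    Word("We", 0.00, 0.20),
    Word("started", 0.20, 0.65),
    Word("filming", 0.65, 1.10),
    Word("in", 1.10, 1.25),
    Word("March", 1.25, 1.80),
]

# Selecting "filming in March" gives the clip to cut from the source video.
print(selection_to_segment(transcript, 2, 4))  # Segment(start=0.65, end=1.8)
```

Keeping timings on individual words, rather than on whole sentences, is what lets a selection start and end mid-sentence.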

With autoEdit, a process that once took days or weeks of work now takes just a few hours.

Hello, autoEdit

Overview of a typical use case.

Here’s a basic example of how to use autoEdit.

Step 1: Add video.

Step 2: Wait five minutes for an automated transcription.

Step 3: Explore the transcription and make some selections.

Step 4: Export the selections.

Step 5: Create a video sequence from the selections in the video editing software of your choice, such as Premiere.

See the user manual or this video demo for more details.

No Scissors or Tape Required

The core function of autoEdit is based on the time-honored concept of the paper edit, which video editors have used for decades. To make a paper edit, you select lines of text from the transcriptions of your documentary’s interviews, which means you need full transcriptions beforehand. Then you arrange those lines in the order you’d like to see them on screen. It works well, but it is very time-consuming.

I was first introduced to paper editing while doing an MA in Documentary Films at LCC in London. We learned to do an “analog paper edit” using paper, scissors, and tape (similar to the one I taught in this workshop).

The idea stuck with me, and I tried a bunch of workflows and prototypes. They worked for me, but none was as good as a paper edit at a few core things.

With autoEdit, users can replicate much of what’s useful about a paper edit in much less time. And like a paper edit, autoEdit sits right at the point of collaboration between an edit producer and a video editor, where it can smooth communication between them.

So why use autoEdit? Three reasons.

It’s a Tool Everyone Can Use

When I talked to journalists in newsrooms, one need kept coming up: tools that require less training and are easier to use.

With autoEdit, we’re not trying to replace video editing software. Instead, autoEdit allows editorial staff who don’t know video editing software to produce a “rough cut” video sequence by selecting text in the transcriptions. The video can then be refined in traditional editing software.

Why take this approach? Video editing software has a steep learning curve. It is also not designed to help with story crafting: it’s made to cut video and audio into segments, with no semantic insight into the content. Editorial staff, however, have great insight into how to craft the story they are going to tell. autoEdit lets them carry that insight into the video edit itself by creating a rough cut without opening complicated editing software. (Depending on the use case, this rough cut might even be the final output, for example if you are just looking for a few sound bites or quotes for Twitter or Facebook.)

It Encourages Better, Faster Collaboration

Normally, an edit producer gives a paper edit to a video editor to assemble into a video sequence, and the video editor helps the edit producer strengthen the story structure by giving feedback on the paper edit.

With autoEdit, you can export an Edit Decision List (EDL), which spares the video producer the tedious work of reassembling the sequence by hand. The current version of autoEdit can also export the corresponding time-coded text of the paper edit. Because this is such a quick and seamless operation, it’s easier to get feedback and iterate on changes. (Future versions of the tool could include a web-based app that allows multi-user collaboration in the style of Google Docs.)
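To show what an EDL contains, here is a minimal Python sketch that writes a simplified CMX 3600-style EDL from an ordered list of selections. It is not autoEdit’s exporter: the `Clip` shape, the 25 fps non-drop-frame timecode, and the fixed reel name `AX` are all assumptions, and editing applications differ in how strictly they parse EDLs. Each event lists a source in/out point and the matching record in/out point on the assembled timeline.

```python
from dataclasses import dataclass


@dataclass
class Clip:
    """A selected segment of a source file, in seconds (hypothetical shape)."""
    source: str
    start: float
    end: float


def frames_to_timecode(frames: int, fps: int) -> str:
    """Format a frame count as non-drop-frame HH:MM:SS:FF timecode."""
    total_seconds, ff = divmod(frames, fps)
    hh, rem = divmod(total_seconds, 3600)
    mm, ss = divmod(rem, 60)
    return f"{hh:02d}:{mm:02d}:{ss:02d}:{ff:02d}"


def build_edl(title: str, clips: list[Clip], fps: int = 25) -> str:
    """Lay the clips end to end on the record timeline as straight cuts ("C")."""
    lines = [f"TITLE: {title}", "FCM: NON-DROP FRAME", ""]
    record_in = 0  # record timeline position, counted in whole frames to avoid drift
    for number, clip in enumerate(clips, start=1):
        src_in = round(clip.start * fps)
        src_out = round(clip.end * fps)
        record_out = record_in + (src_out - src_in)
        tc = [frames_to_timecode(f, fps) for f in (src_in, src_out, record_in, record_out)]
        # Event number, reel name, track (V = video), transition (C = cut), four timecodes.
        lines.append(f"{number:03d}  AX       V     C        {' '.join(tc)}")
        lines.append(f"* FROM CLIP NAME: {clip.source}")
        lines.append("")
        record_in = record_out
    return "\n".join(lines)


clips = [Clip("interview_a.mov", 12.0, 18.0), Clip("interview_b.mov", 3.2, 7.0)]
print(build_edl("Rough cut", clips))
```

Counting the record timeline in whole frames, rather than summing float seconds, keeps consecutive events butted up against each other without rounding gaps.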

All of this allows for better story crafting, both at the dialogue level and at the level of the overarching story structure. It’s also great for getting better, more actionable feedback. If you are working for an executive or an external client, getting feedback early saves time and money: showing the paper edit text and a video sequence side by side generally means you won’t be asked to change as much at the end of the project. Showing a rough cut made in autoEdit accomplishes the same thing.

It Puts the Focus on Crafting a Great Story

Paper editing, and now autoEdit, lets you concentrate on crafting your story. This follows screenwriting teacher Robert McKee’s idea of writing “from the inside out” as opposed to “from the outside in.”

Writing from “the outside in” looks like this, according to McKee:

The struggling writer tends to have a way of working that goes something like this: He dreams up an idea, noodles on it for a while, then rushes straight to the keyboard.

But writing from “the inside out” looks like this:

Successful writers tend to use the reverse process…. writing on stacks of three-by-five cards: a stack for each act—three, four, perhaps more…. Using one- or two-sentence statements, the writer simply and clearly describes what happens in each scene, how it builds and turns….

Because selections can be moved around and exported in a particular order, autoEdit helps editors tell stories “from the inside out.”
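Rearranging selections works much like shuffling McKee’s index cards. The Python sketch below is illustrative only: it treats a story outline as an ordered list of hypothetical `Selection` objects, moves one to a new position, and reports where each would start if the selections were played back to back. None of these names come from autoEdit itself.

```python
from dataclasses import dataclass


@dataclass
class Selection:
    """A quote picked from a transcript, with its source time range in seconds (hypothetical)."""
    text: str
    start: float
    end: float

    @property
    def duration(self) -> float:
        return self.end - self.start


def move(outline: list[Selection], old: int, new: int) -> list[Selection]:
    """Return a copy of the outline with one selection moved to a new position."""
    reordered = outline.copy()
    reordered.insert(new, reordered.pop(old))
    return reordered


def running_order(outline: list[Selection]) -> list[tuple[float, str]]:
    """Where each selection would start in the rough cut if played back to back."""
    position = 0.0
    order = []
    for selection in outline:
        order.append((position, selection.text))
        position += selection.duration
    return order


outline = [
    Selection("We ran out of money.", 40.0, 43.0),
    Selection("It started as a side project.", 5.0, 9.0),
    Selection("Then everything changed.", 90.0, 92.5),
]
story = move(outline, 1, 0)  # open with how it started
for start, text in running_order(story):
    print(f"{start:5.1f}s  {text}")
```

Returning a new list from `move` leaves the previous ordering intact, which makes it easy to compare two versions of the outline.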

Future versions of autoEdit will go even further.

autoEdit vs. Other Tools

In the tools section of my website, you can see other projects that take a different approach to the similar problem of getting insight into video through transcriptions. Most of these apps focus on slightly different use cases, although some share the same problem domain: using the text of a transcription as a way into the video. autoEdit’s use case is factual documentary video production.

Building autoEdit at Vox

When I first started prototyping the concept for autoEdit, I was working on a series of short documentaries for the BBC Academy. I started by experimenting with a “digital workflow” using Excel. By optimizing the post-production stage of making a rough cut, which professionals sometimes call a “radio edit,” that workflow cut post-production time from three weeks to three hours.

That’s when I knew I was onto something.

Later, as a Knight-Mozilla Fellow at Vox, I was able to build on everything I’d learned and tried. Vox Media’s brands (The Verge, Vox.com, SB Nation, Eater, Polygon, Racked, Curbed, Recode) provided a great variety of video production use cases. Some brands have experienced video teams with documentary filmmaking backgrounds, while others focus more on YouTube explainers. Teams vary in experience level and configuration. For example, journalists and a studio team sometimes work together to make videos until a more dedicated video team can be recruited.

Coming from a TV documentary background, what appealed to me most about Vox was everyone’s flexibility and curiosity about trying new things during the production process. Support from the Storytelling Studio team with code reviews, design, project management, user testing, and general feedback also helped me refine my product development process.

How I Worked

I started with user research, keeping an open mind to identify pain points in the teams’ video production. I took a lean approach that was hypothesis-driven and relied on frequent iteration. I worked hard on modeling the problem domain because, as Russel Winder says: “If you are able to capture the problem domain as the core of the design of your program, then the program code is likely to be more stable, more reusable and more easily adaptable to specific problems as they come and go.” (Developing Java Software, 3rd Edition, 2006)

I also followed a component-based design approach to build the backend of the application. That meant working on key components in isolation and making sure they worked with the provided input and returned the expected output before combining them.

For the front end, a paper prototype came first, aiming to get across features and the user journey without worrying about the UI. Next, a Vox designer got involved in the process to refine the UX/UI.

About the Speech-to-Text API

Manual transcription is a time-intensive process that takes three to four times the length of the media. Third-party manual transcription services generally have a 24-hour turnaround and are often costly. In comparison, autoEdit generates a transcript in five minutes regardless of media length. This frees up time and resources for video producers and lets them focus on which story to tell from the transcription.

I considered several speech-to-text APIs, including Google Speech, Rev.com, and Spoken Data, but I decided to use IBM Watson: its documentation and API reference made it very easy to get up and running, and its pricing structure was clear, while I couldn’t get my head around Google’s documentation. Microsoft’s speech API claims close to human accuracy, but I could not find an up-to-date version of it that supported transcribing more than two minutes of audio, so I couldn’t try it out.

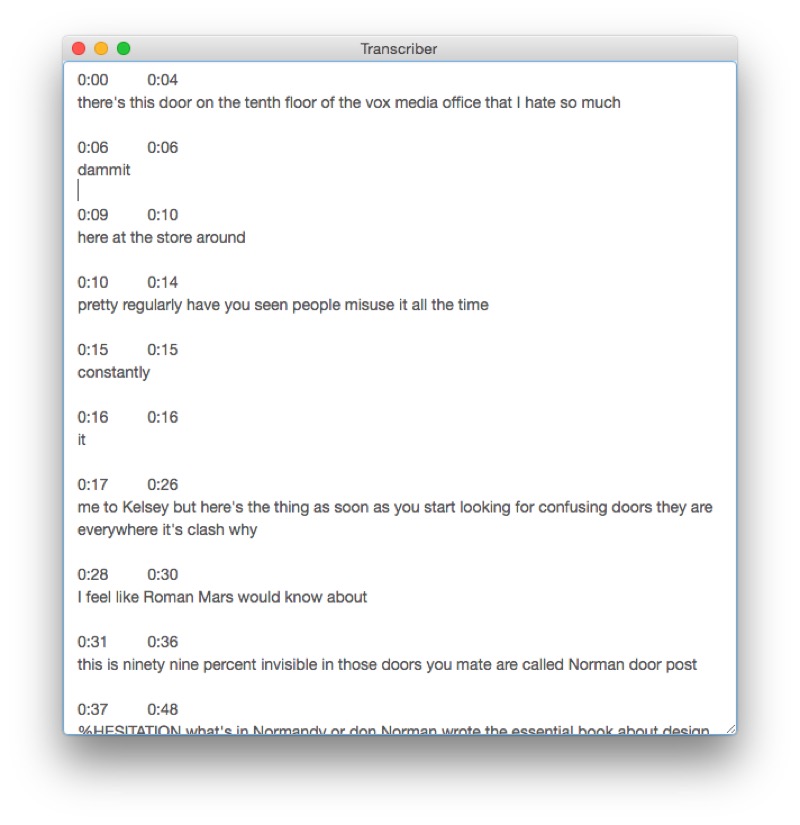

I wanted to make sure that IBM Watson met the editorial staff’s minimum threshold of acceptability before building a tool on top of that service. So I built and user-tested a small “Transcriber” app that, given a video or audio file, returns the text of the transcription from the IBM Watson Speech to Text service.

Journalists turned out to prefer transcribing manually over correcting the generated transcripts, but video producers were more than happy with the automated output.

One piece of feedback at this stage from a video producer was to add timecodes to the text, to make it easier to find the corresponding video and to use the text for paper editing in Google Docs.
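A timecoded transcript of this kind might be produced along these lines. The Python sketch below is an assumption-laden illustration, not the Transcriber’s code: it assumes word-level timings are available, starts a new paragraph whenever the speaker pauses for more than a hypothetical `pause` threshold, and prefixes each paragraph with an `[HH:MM:SS]` stamp that can be pasted into a document.

```python
from dataclasses import dataclass


@dataclass
class Word:
    """One transcribed word with start/end times in seconds (hypothetical shape)."""
    text: str
    start: float
    end: float


def hms(seconds: float) -> str:
    """Format seconds as [HH:MM:SS], dropping fractions of a second."""
    total = int(seconds)
    hours, rem = divmod(total, 3600)
    minutes, secs = divmod(rem, 60)
    return f"[{hours:02d}:{minutes:02d}:{secs:02d}]"


def timecoded_paragraphs(words: list[Word], pause: float = 1.0) -> list[str]:
    """Start a new timecoded paragraph whenever the gap between words exceeds `pause` seconds."""
    paragraphs: list[str] = []
    current: list[Word] = []

    def flush() -> None:
        if current:
            paragraphs.append(f"{hms(current[0].start)} " + " ".join(w.text for w in current))

    for word in words:
        if current and word.start - current[-1].end > pause:
            flush()
            current = []
        current.append(word)
    flush()
    return paragraphs


words = [
    Word("Thanks", 0.0, 0.4), Word("for", 0.4, 0.5), Word("coming.", 0.5, 0.9),
    Word("So", 63.2, 63.4), Word("tell", 63.4, 63.6), Word("me", 63.6, 63.8),
]
for line in timecoded_paragraphs(words):
    print(line)
```

Breaking on pauses tends to follow the rhythm of the interview, which makes each stamp a natural point to jump to in the footage.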

I added the timecode feature and then forgot about it as I started work on the second iteration.

The editorial product team then received the following feedback from one of the video producers.

“… I just wanted to say thank you so much. It’s great. It will measurably improve our workflow and general well-being. You all are brilliant.”

Joss Fong, Senior Editorial Producer, Vox.com

This feedback showed we were onto something, and it confirmed my hypothesis: video producers found the quality of the automated speech-to-text (STT) acceptable, and it was good timing to introduce the next iteration of the tool. It was also great to get more buy-in from the editorial products team and Storytelling Studio.

More autoEdit Features

Multi-Language Capabilities

As reaching global audiences becomes increasingly important, media outlets are beginning to produce content in languages other than English. However, as with transcription, translation services can create a bottleneck. With that in mind, the following languages are currently supported using IBM Watson: English US and UK; French; Spanish; Japanese; Modern Standard Arabic; Brazilian Portuguese; and Mandarin Chinese.

Offline Options

With autoEdit, a transcription can be generated locally on a computer without an internet connection. I prioritized this feature because I believe the most interesting documentaries are made at the edges of video production. The material you are working with might be so sensitive that you don’t want to upload it to a third-party server. You might be in a remote location with a slow internet connection, or none at all. Or you might be on a long flight back from a reporting trip and want to get started while everything is still top of mind.

Captioning and Export Support

In the era of autoplaying social media videos, tests have shown that captioned videos get more views. Captions are also critical for accessibility. With autoEdit, users can export an SRT caption file of the video in addition to transcriptions.
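SRT is a simple plain-text format: a running cue number, a `start --> end` line with `HH:MM:SS,mmm` timestamps, the caption text, and a blank line. The Python sketch below shows one way to build it from word timings. It is an illustration under stated assumptions, not autoEdit’s exporter: the `Word` shape is hypothetical, and the rule of filling each cue up to `max_chars` characters is a deliberately naive choice.

```python
from dataclasses import dataclass


@dataclass
class Word:
    """One transcribed word with start/end times in seconds (hypothetical shape)."""
    text: str
    start: float
    end: float


def srt_time(seconds: float) -> str:
    """Format seconds as the SRT timestamp HH:MM:SS,mmm."""
    millis = round(seconds * 1000)
    hours, rem = divmod(millis, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    secs, ms = divmod(rem, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d},{ms:03d}"


def words_to_srt(words: list[Word], max_chars: int = 32) -> str:
    """Group words into cues of at most max_chars characters and render them as SRT."""
    cues: list[list[Word]] = []
    for word in words:
        if cues and len(" ".join(w.text for w in cues[-1] + [word])) <= max_chars:
            cues[-1].append(word)
        else:
            cues.append([word])  # start a new cue (an over-long word gets its own cue)
    blocks = []
    for index, cue in enumerate(cues, start=1):
        text = " ".join(w.text for w in cue)
        blocks.append(f"{index}\n{srt_time(cue[0].start)} --> {srt_time(cue[-1].end)}\n{text}\n")
    return "\n".join(blocks)  # blank line between cues


words = [
    Word("Captions", 0.0, 0.5), Word("help", 0.5, 0.8), Word("people", 0.8, 1.2),
    Word("watching", 1.2, 1.7), Word("without", 1.7, 2.1), Word("sound.", 2.1, 2.6),
]
print(words_to_srt(words, max_chars=20))
```

A production exporter would likely also break cues at sentence ends and pauses, and cap how long each cue stays on screen.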

Once you have proofed your captions for accuracy, you can also use another tool I made to burn them into a video file without using video editing software. That tool started as a prototype to test a hypothesis about speeding up the captioning workflow.
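Burning captions in without an editing application can be done from the command line. One common approach, not necessarily how that tool works, is FFmpeg’s `subtitles` video filter, which needs an FFmpeg build with libass. The Python sketch below builds and runs that command; the function names are hypothetical, and it assumes simple file names, since colons, commas, or quotes in the SRT path would need escaping inside the filter expression.

```python
import shutil
import subprocess


def burn_captions_command(video: str, srt: str, output: str) -> list[str]:
    """Build an ffmpeg command that renders the SRT captions into the video frames."""
    return [
        "ffmpeg",
        "-i", video,
        "-vf", f"subtitles={srt}",  # requires an ffmpeg build with libass
        "-c:a", "copy",             # audio is copied unchanged; video is re-encoded
        output,
    ]


def burn_captions(video: str, srt: str, output: str) -> None:
    """Run ffmpeg, raising if it is missing or exits with an error."""
    if shutil.which("ffmpeg") is None:
        raise RuntimeError("ffmpeg was not found on PATH")
    subprocess.run(burn_captions_command(video, srt, output), check=True)


# Example: burn_captions("interview.mp4", "captions.srt", "interview_captioned.mp4")
```

Because the captions become part of the picture, the video has to be re-encoded, so this step is best left until the captions have been proofed.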

What’s Next for autoEdit

The third release will allow users to bring selections from multiple transcriptions into a story outline. Eventually a video preview of the story selection will also be possible in order to shorten the feedback loop.

The project is open source and freely available.

Check out the project page, or download the latest stable release, and see the user manual to find out how to set up the speech-to-text system before getting started. Dive into the documentation to learn more.

The project is relatively stable and has been through QA by Vox’s internal team, but if you see an issue or find a bug, feel free to raise it here.

If you’d like to get involved in development, this is also where you can find some interesting issues to tackle. We also use a Trello-style waffle.io board to keep the GitHub issues organized.

Some folks have also shown interest in refactoring the open source forced aligner Gentle into a standalone, self-contained module that returns the transcriptions it creates as a byproduct of the alignment. More info and a proposed solution are in this Google Doc.

If you have any questions, you can reach me at pietro@autoedit.io or find me on Twitter: @pietropassarell

Upcoming event

If you are a developer interested in this problem domain of using transcriptions as a way into the video, you might also be interested in a transcription video event I am organizing with Open News. Sign up to be kept in the loop.

Last but not least

I’d like to thank the folks at the Vox product team and Storytelling Studio, without whom this project would not have been possible. I’d also like to thank the video producers across the various Vox Media brands who were eager to try out and field-test early releases of the software, providing invaluable feedback.

Credits

Pietro Passarelli

Pietro Passarelli is a software developer and documentary filmmaker. He is passionate about projects at the intersection of software development and video production, from the growing trend of interactive documentaries to tools, such as autoEdit, that make video production and post-production easier. While working on broadcast documentaries for the BBC and Channel 4, Pietro noticed the convergence of video production and software development and went on to do an MSc in Computer Science at UCL. He worked as a newsroom developer at The Times and The Sunday Times, where he developed quickQuote, an open-source project that makes it easier and faster for journalists to identify and create interactive video quotes. While at Vox Media as one of the 2016 Knight-Mozilla fellows, he worked with the product team and Storytelling Studio on autoEdit to make video production faster, easier, and more accessible across the Vox Media brands. He is currently a senior software engineer at The New York Times.